| random_seed | best_validation_score | best_k | test_set_score |
|---|---|---|---|
| 0 | 0.7 | 5 | 0.846154 |
| 1 | 0.7 | 1 | 0.538462 |
| 2 | 1.0 | 13 | 0.692308 |
| 3 | 0.7 | 1 | 0.846154 |
| 4 | 0.9 | 5 | 0.769231 |
| 5 | 0.8 | 11 | 0.769231 |

Supervised learning workflow

Model training and selection

Huanfa Chen - huanfa.chen@ucl.ac.uk

13/12/2025

Last week

- Framework of supervised learning

- Evaluation metrics for regression and classification

Objectives of this week

- Understand the different workflows of supervised learning.

- Understand train-test split and train-validation-test split.

- Understand cross-validation and its extensions.

- Know when to use different model evaluation methods.

Supervised Learning

\[ \begin{aligned} (x_i, y_i) &\sim p(x, y) \text{ i.i.d.} \\ x_i &\in \mathbb{R}^n \\ y_i &\in \begin{cases} \mathbb{R}, & \text{(regression)} \\ \mathcal{Y} \text{ (finite set)}, & \text{(classification)} \end{cases} \\ \text{learn } f(x_i) &\approx y_i \\ \text{such that } f(x) &\approx y \end{aligned} \]

Challenges of training supervised models

- To select evaluation metrics (so that the model solves the right problem)

- To design workflow (so that the model generalises well and avoids overfitting)

- A robust workflow leads to an unbiased evaluation of the model’s generalisation performance

- It will select effective model hyperparameters (to avoid overfitting)

Various workflows

- Train-test split

- Train-validation-test split

- Cross-validation + test set

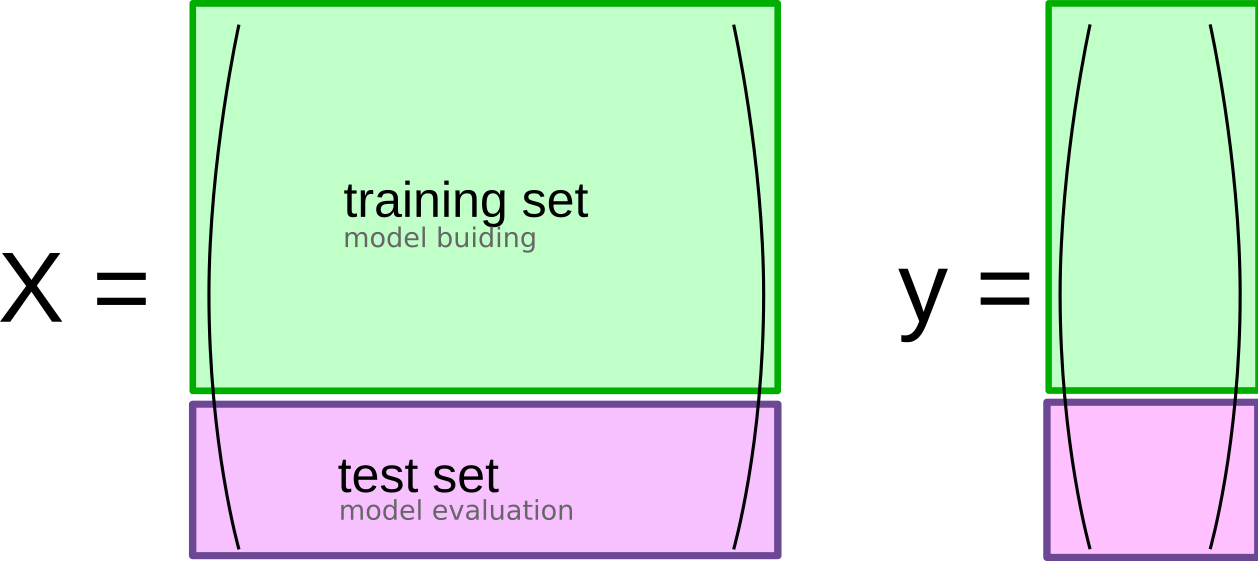

Train-Test split

Train-Test Split (usually 75/25)
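
A minimal sketch of a 75/25 split with scikit-learn's `train_test_split`. The breast cancer dataset is only a stand-in chosen for illustration (an assumption; the slides do not name their data), and `random_state=0` is an arbitrary choice that makes the split reproducible.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split

# Stand-in dataset (an assumption; the slides do not name their data).
X, y = load_breast_cancer(return_X_y=True)

# Hold out 25% of the rows for testing; the remaining 75% are for training.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

print("train rows:", X_train.shape[0])
print("test rows: ", X_test.shape[0])
```

The rows are shuffled before splitting, so the test set is a random sample rather than the last quarter of the file.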

Why Train-Test Split?

Statistics

- No train-test split

- Train and evaluate on whole data

- Estimation is key

- Low model complexity

Machine Learning

- Train-test split

- Train on part, evaluate on held-out part

- Prediction (Generalisation) is key

- High model complexity; overfitting risk

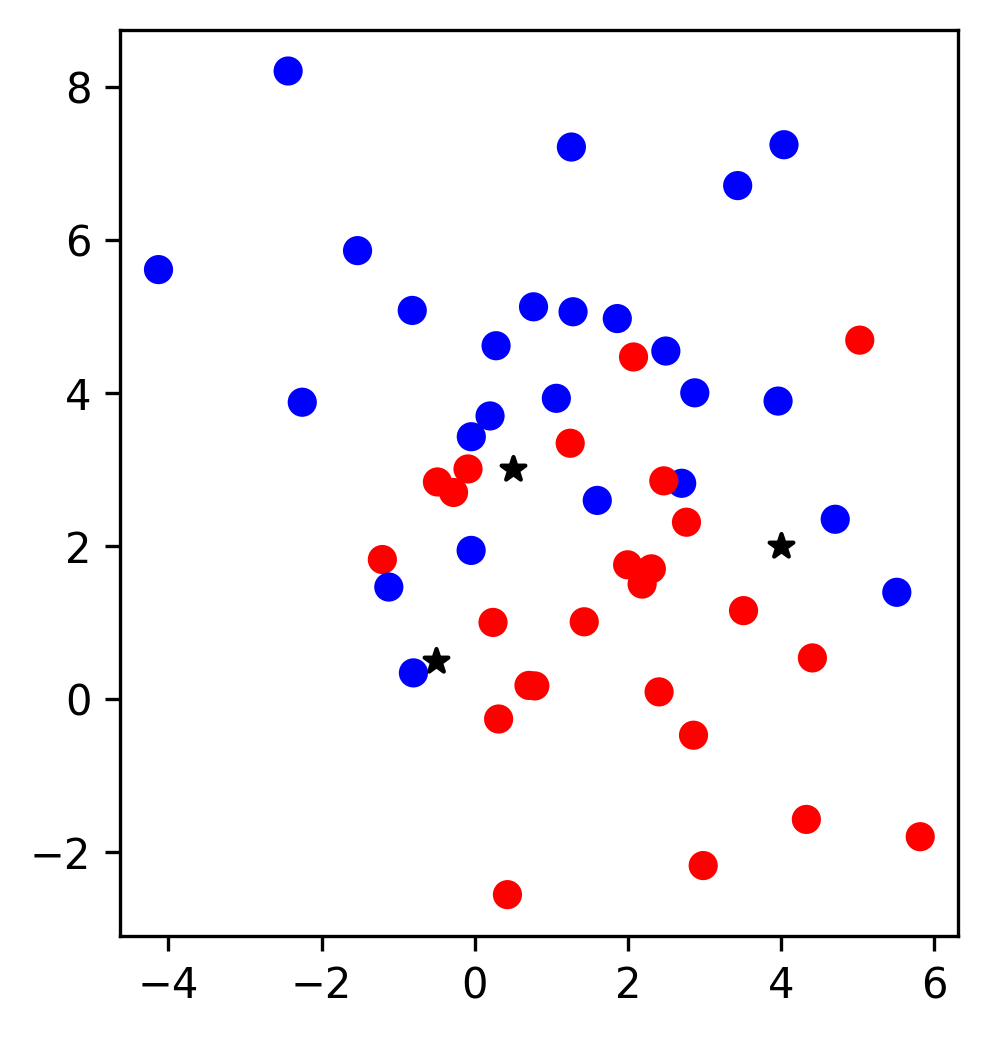

Example - K Nearest Neighbors (KNN)

- With a trained KNN, a new data point is classified by the majority label of its k nearest neighbours in the training set
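
The majority-vote rule can be sketched in a few lines of NumPy. The function name `knn_predict`, the Euclidean distance, the tie-breaking rule, and the toy data are all assumptions made for illustration; scikit-learn's `KNeighborsClassifier` is what the later slides use.

```python
import numpy as np

def knn_predict(X_train, y_train, x_new, k=3):
    """Label x_new by majority vote among its k nearest training points."""
    # Euclidean distance from x_new to every training point.
    distances = np.linalg.norm(X_train - x_new, axis=1)
    # Indices of the k closest training points.
    nearest = np.argsort(distances)[:k]
    # Majority vote; on a tie, the smallest label value wins.
    labels, counts = np.unique(y_train[nearest], return_counts=True)
    return labels[np.argmax(counts)]

# Toy data: two small clusters labelled 0 and 1.
X_train = np.array([[0.0, 0.0], [0.1, 0.2], [0.2, 0.1],
                    [1.0, 1.0], [0.9, 1.1], [1.1, 0.9]])
y_train = np.array([0, 0, 0, 1, 1, 1])

label_a = knn_predict(X_train, y_train, np.array([0.15, 0.15]), k=3)
label_b = knn_predict(X_train, y_train, np.array([0.95, 1.0]), k=1)
print(label_a, label_b)
```

Note that "training" a KNN only stores the data; all the work happens at prediction time.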

KNN (k=1)

- When k=1, a new data point is labelled by its nearest neighbour

- Sensitive/Complex decision boundary

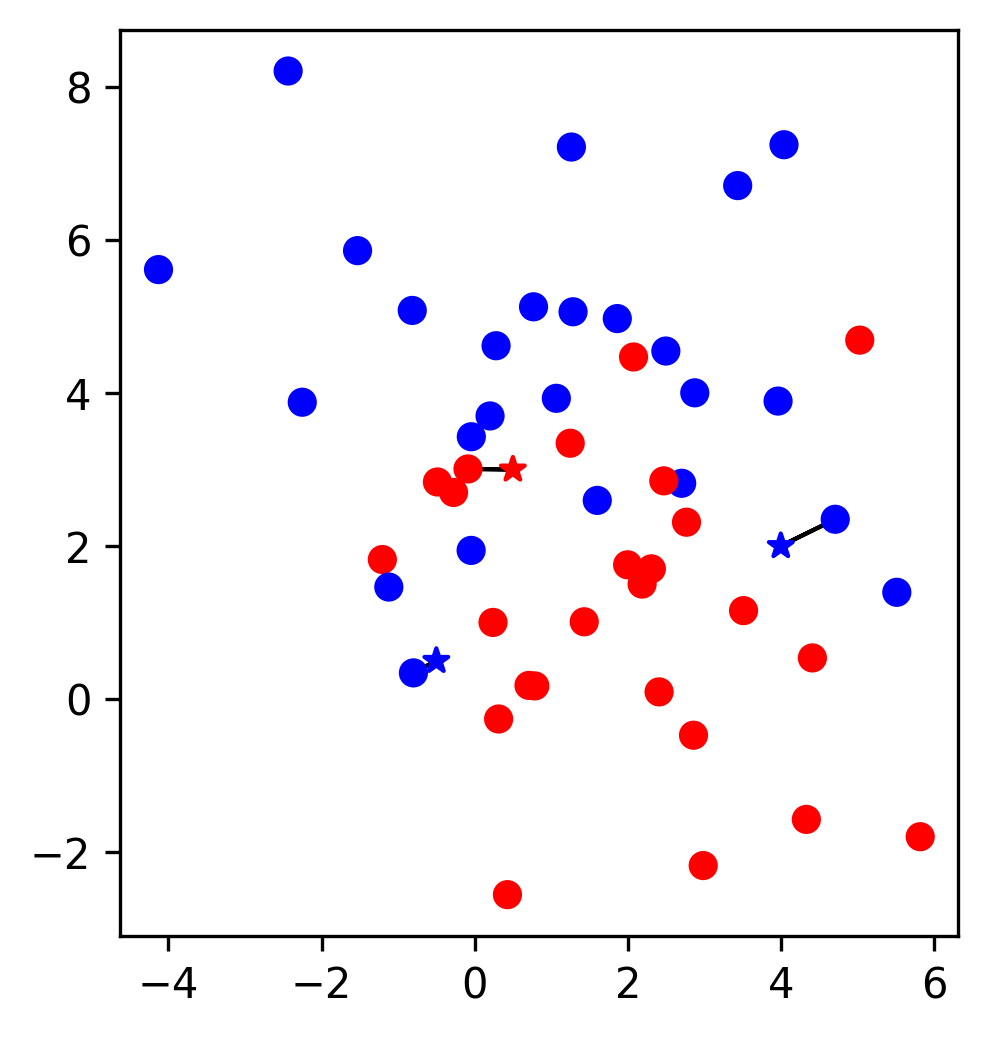

KNN (k=3)

- The label of a new data point is the majority vote of its 3 nearest neighbours

- The decision boundary is less complex and less sensitive to training data

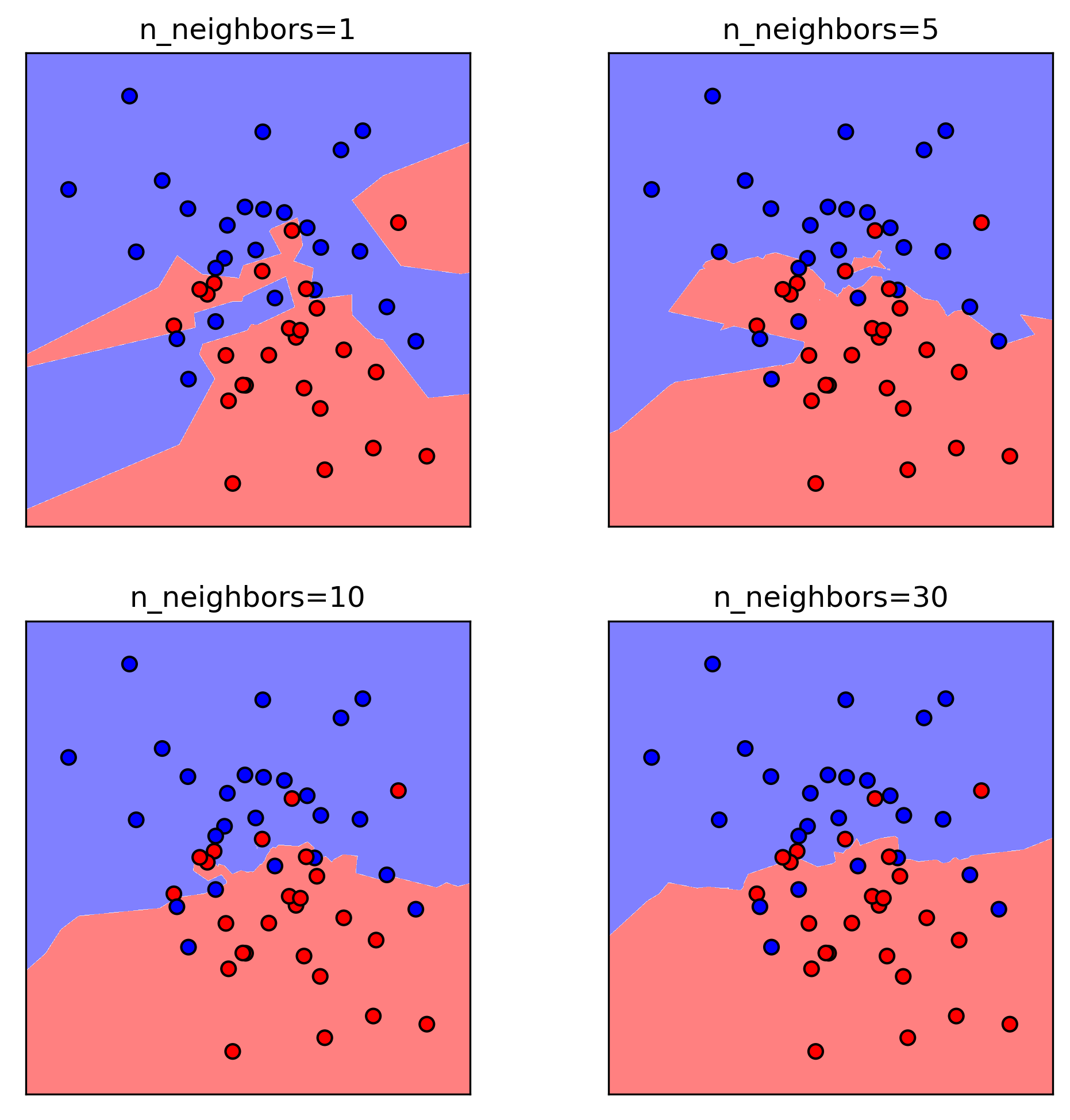

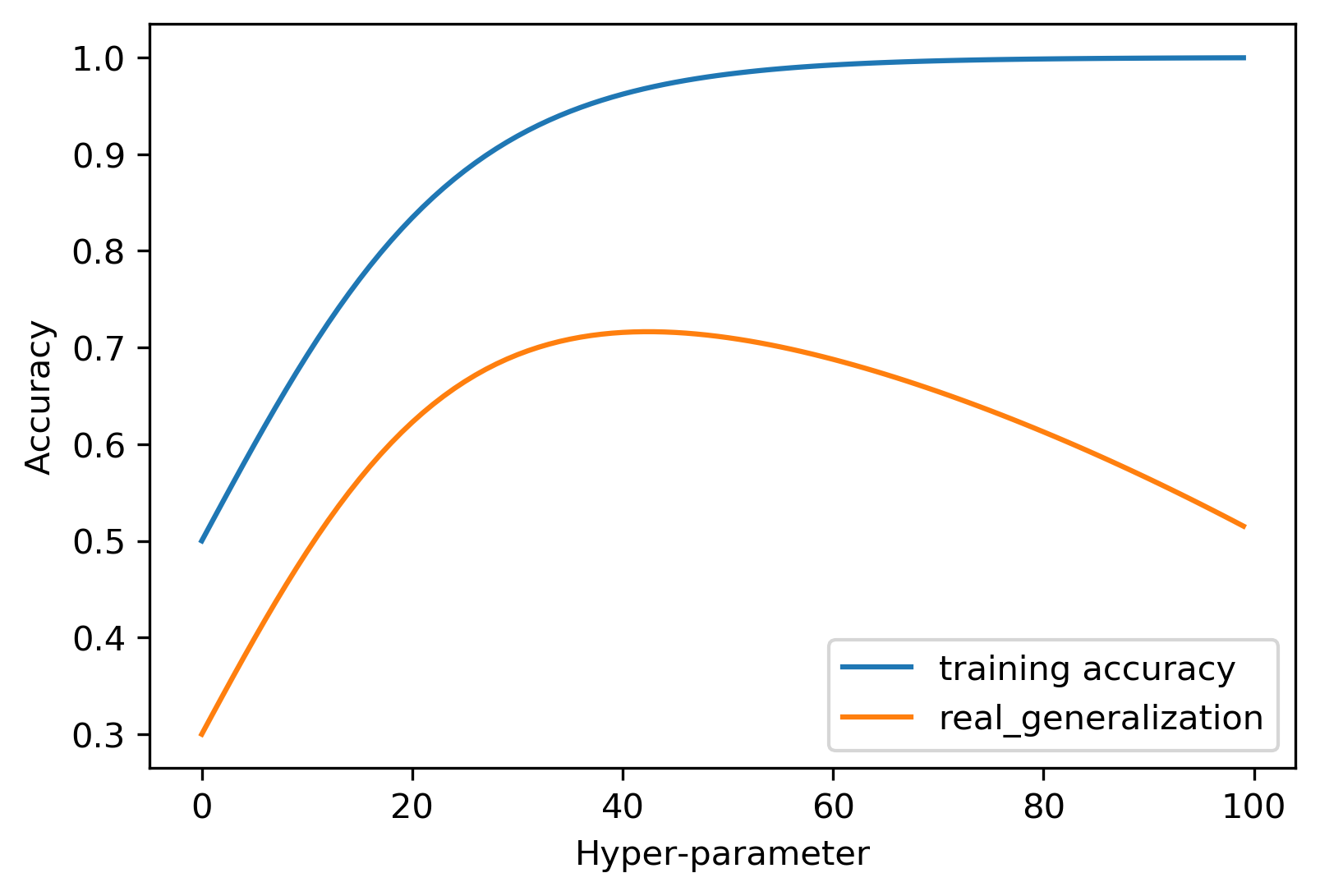

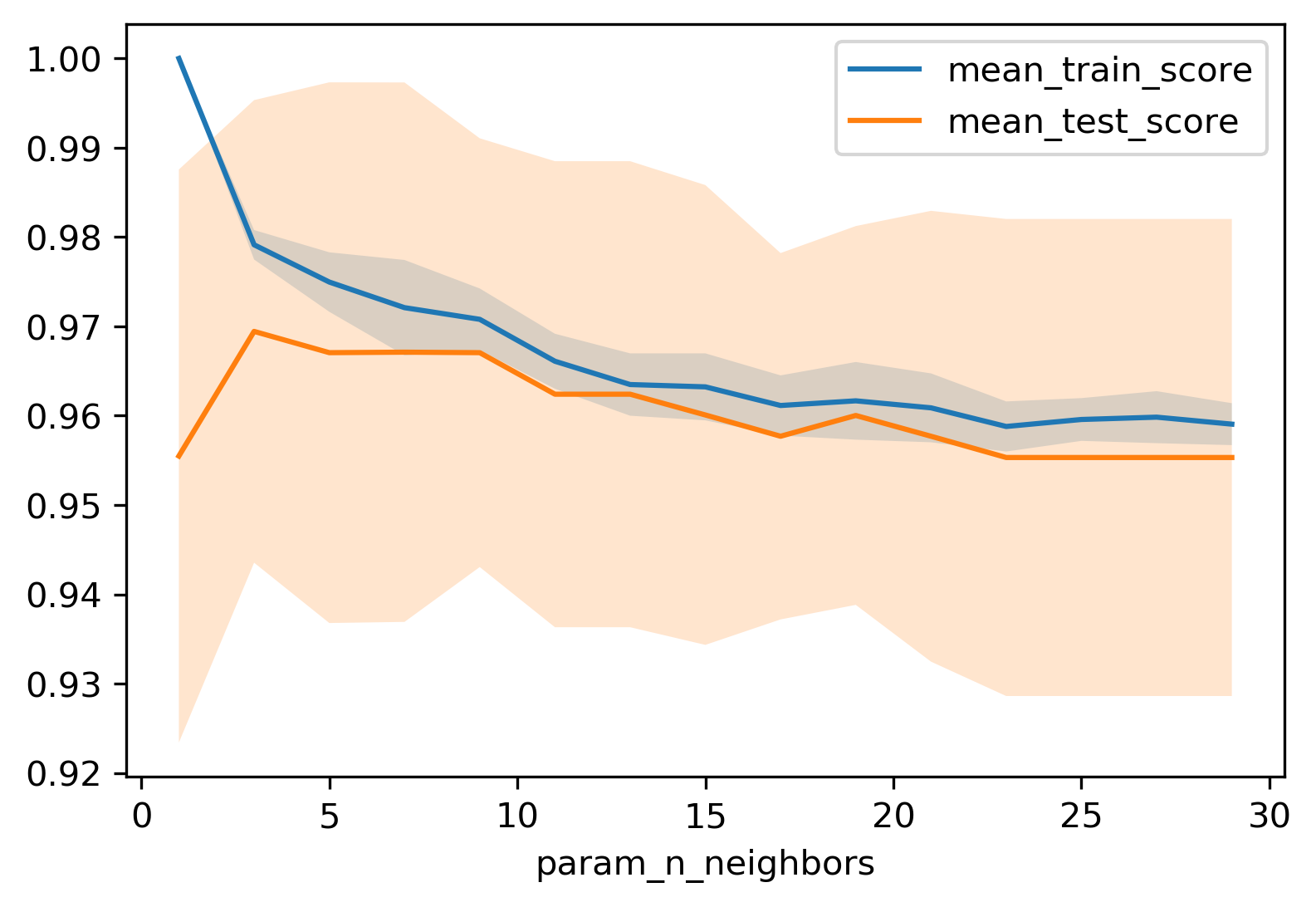

Influence of n_neighbors

- Trade-off between complexity and generalisation

- Smaller k → complex boundary, higher model complexity, lower generalisation

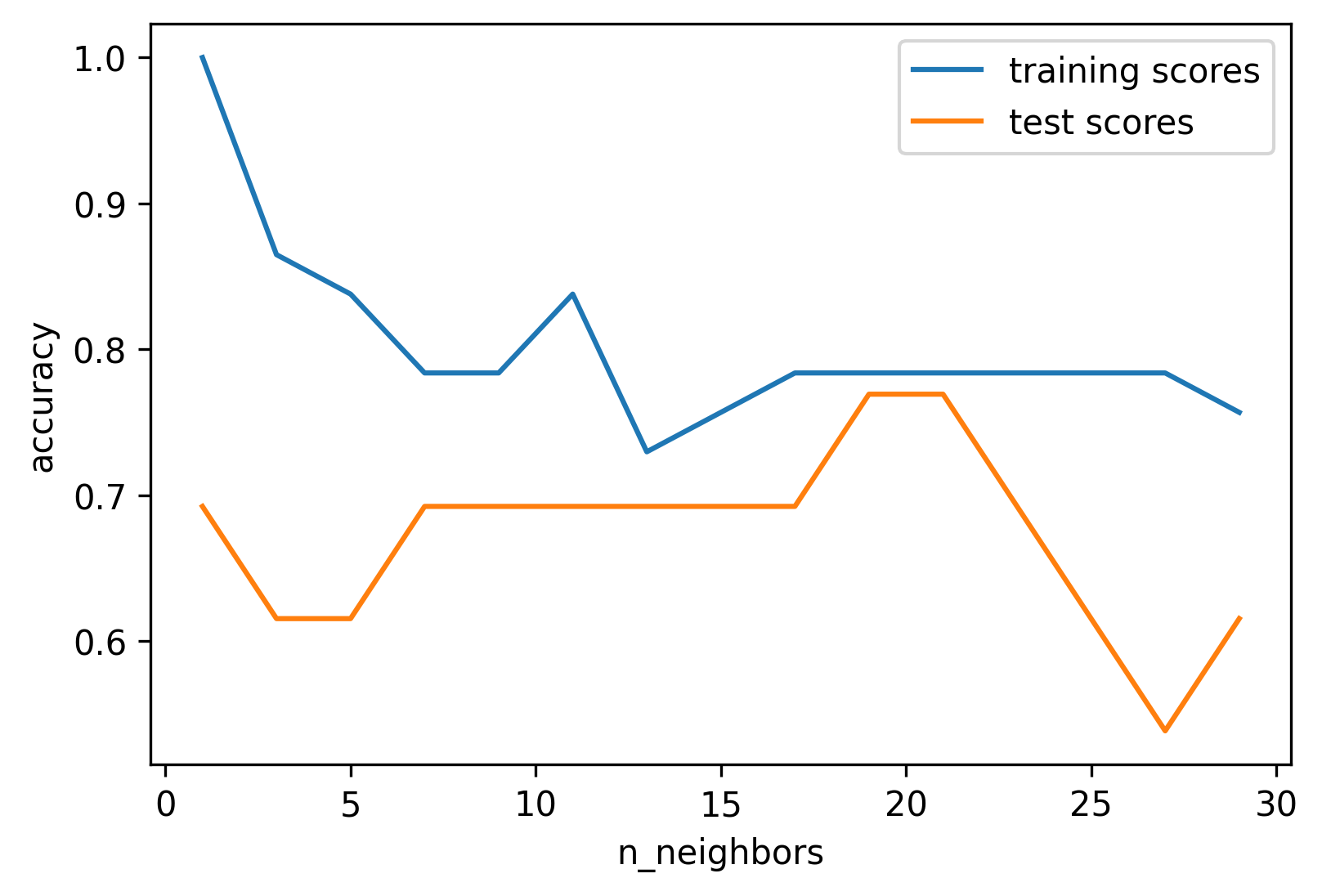

Model Complexity

- Train score ↓ as k increases

- Test score peaks at intermediate k

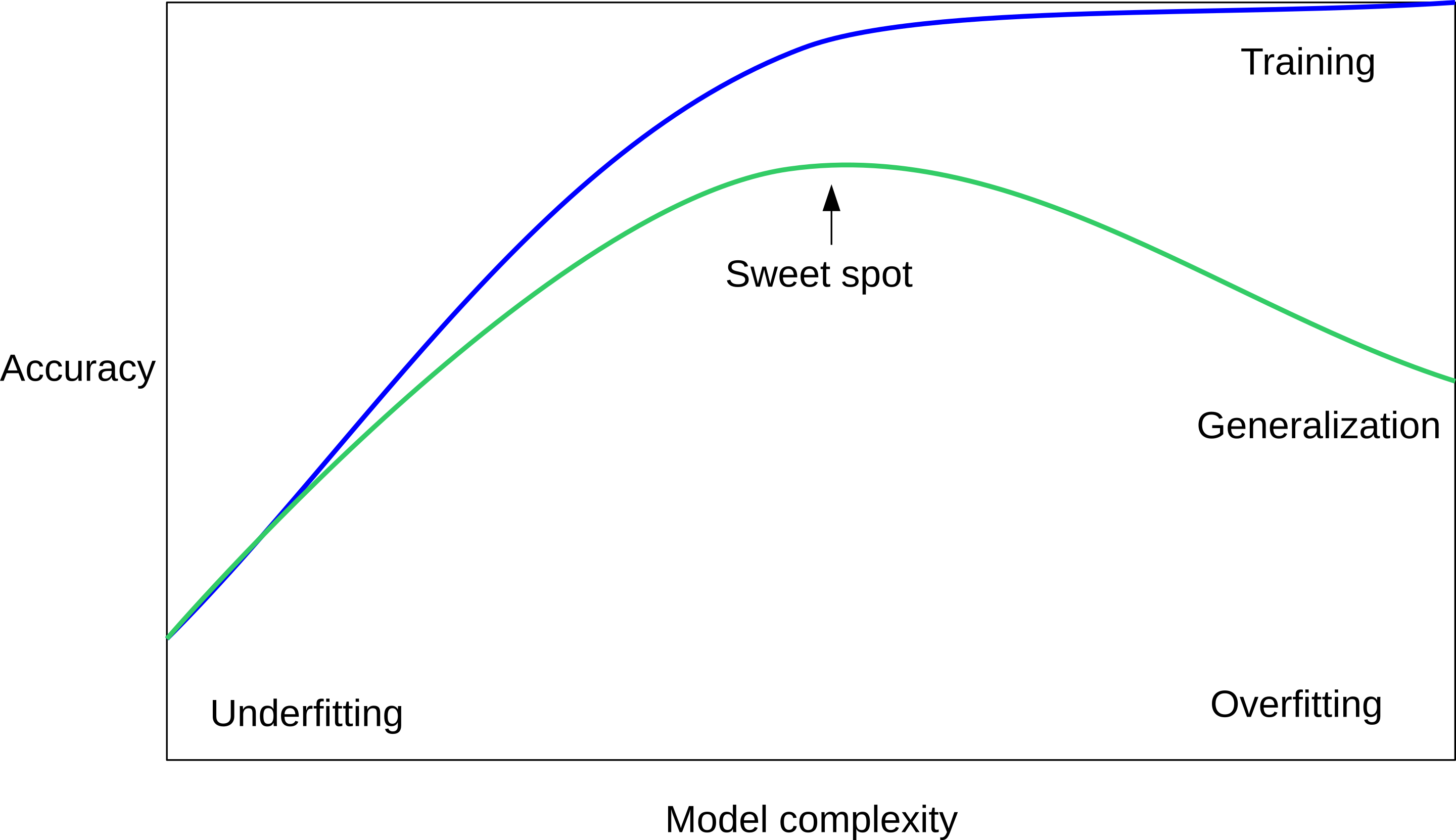

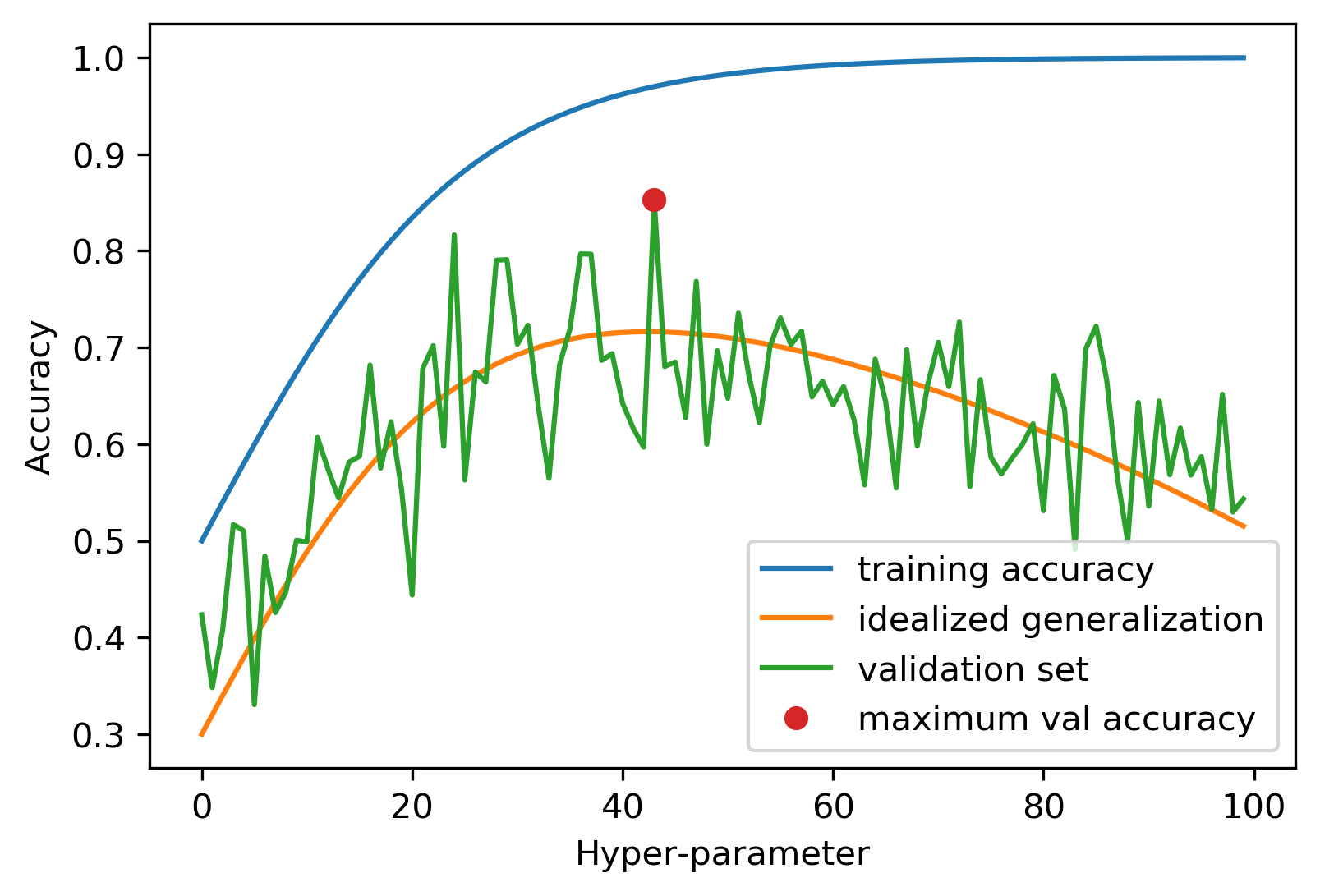
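
A sketch of how train and test scores could be computed for a range of k. The dataset is a stand-in (an assumption), so the numbers will differ from the plots in the slides.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

X, y = load_breast_cancer(return_X_y=True)  # stand-in dataset (assumption)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

results = []
for k in range(1, 30, 2):
    knn = KNeighborsClassifier(n_neighbors=k).fit(X_train, y_train)
    # Accuracy on the data the model has seen, and on held-out data.
    results.append((k, knn.score(X_train, y_train), knn.score(X_test, y_test)))

for k, train_acc, test_acc in results:
    print(f"k={k:2d}  train={train_acc:.3f}  test={test_acc:.3f}")
```

With k=1 each training point is its own nearest neighbour, which is why the train score starts near 1.0.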

Overfitting vs Underfitting

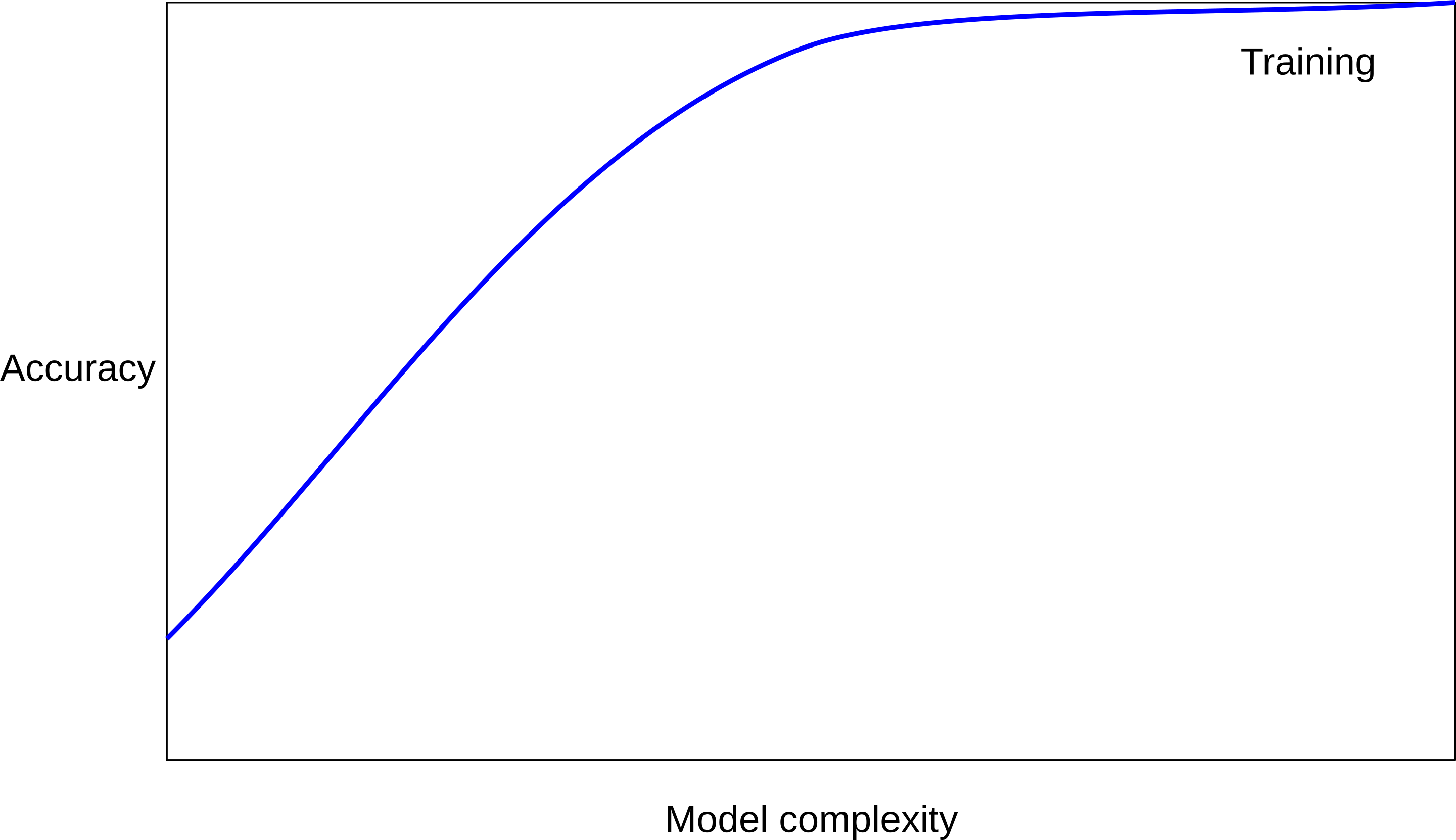

- Performance on training data generally increases with model complexity

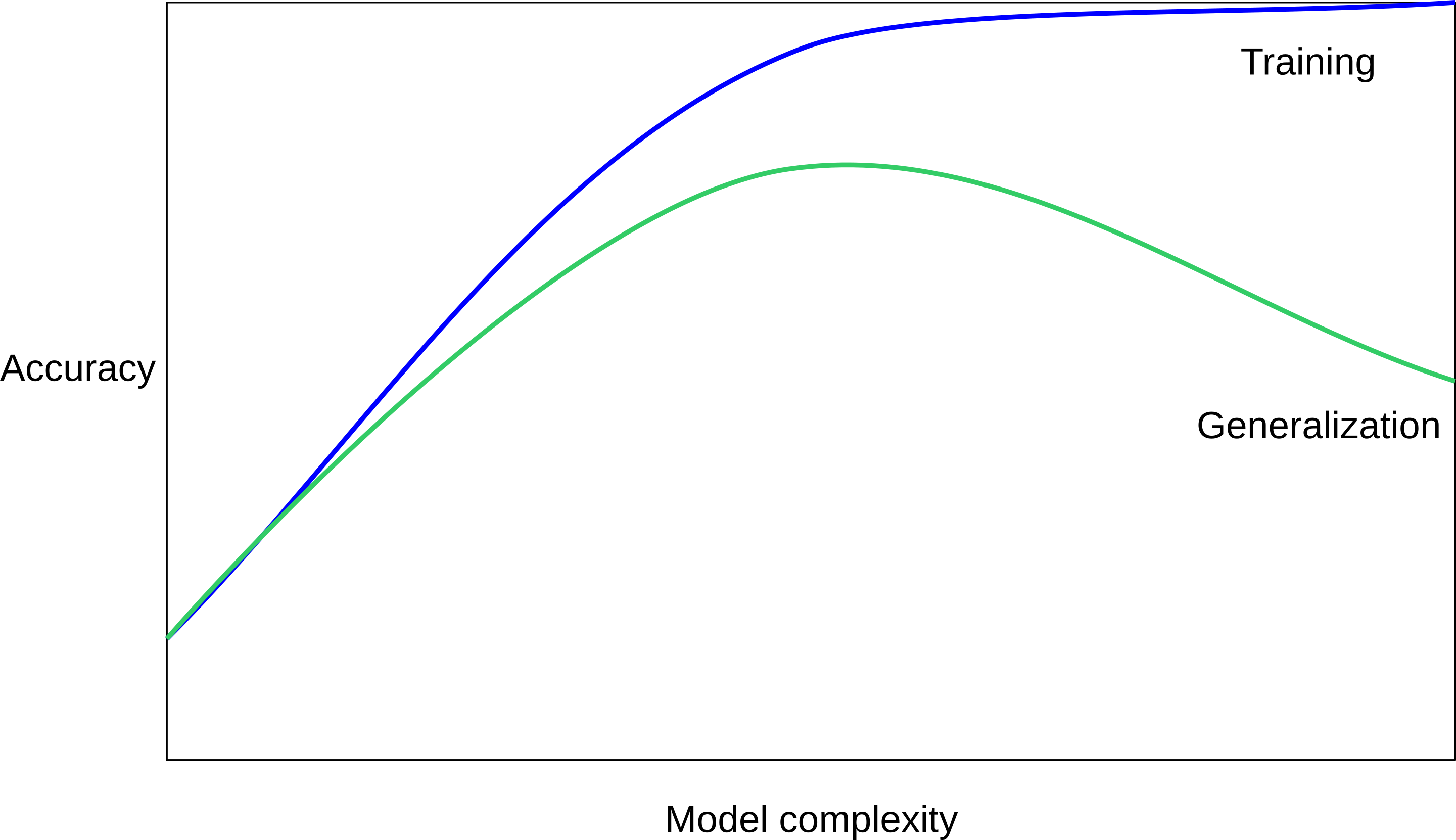

Overfitting vs Underfitting

- Performance on testing data first increases with model complexity, and then decreases due to overfitting on training data

Overfitting vs Underfitting

- The optimal hyperparameter achieves balance between underfitting and overfitting

Using test set to select k and evaluate performance. Happy ending?

- Report: best k=19, test accuracy=0.77

- Good for choosing k

- But the test accuracy is overly optimistic and biased

- Problem: test set is used twice, for both choosing k and final evaluation
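
One way to see the bias is a small simulation on pure-noise data, where no classifier can truly beat 50% accuracy. This is an illustrative sketch, not the experiment behind the slides; the data sizes and seeds are arbitrary assumptions.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

rng = np.random.default_rng(0)
# Features carry no information about the labels,
# so the true accuracy of any k is 0.5.
X = rng.normal(size=(200, 5))
y = rng.integers(0, 2, size=200)

X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

test_scores = {}
for k in range(1, 30, 2):
    knn = KNeighborsClassifier(n_neighbors=k).fit(X_train, y_train)
    test_scores[k] = knn.score(X_test, y_test)

# Picking k by its test score reports the maximum of several noisy estimates.
best_k = max(test_scores, key=test_scores.get)
mean_score = float(np.mean(list(test_scores.values())))
print(f"mean test accuracy over all k:       {mean_score:.3f}")
print(f"test accuracy of the k chosen on it: {test_scores[best_k]:.3f}")
```

The reported score is the best of 15 noisy estimates, so it can never be below their average; any margin above 0.5 here is a selection artefact, not skill.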

Summary of train-test split

- The idea is to train the model on the training set, and evaluate it on the test set.

- But, problematic to use the test set for both hyperparameter tuning and final evaluation.

- If we need to choose model hyperparameters, we need a separate validation set (or cross-validation).

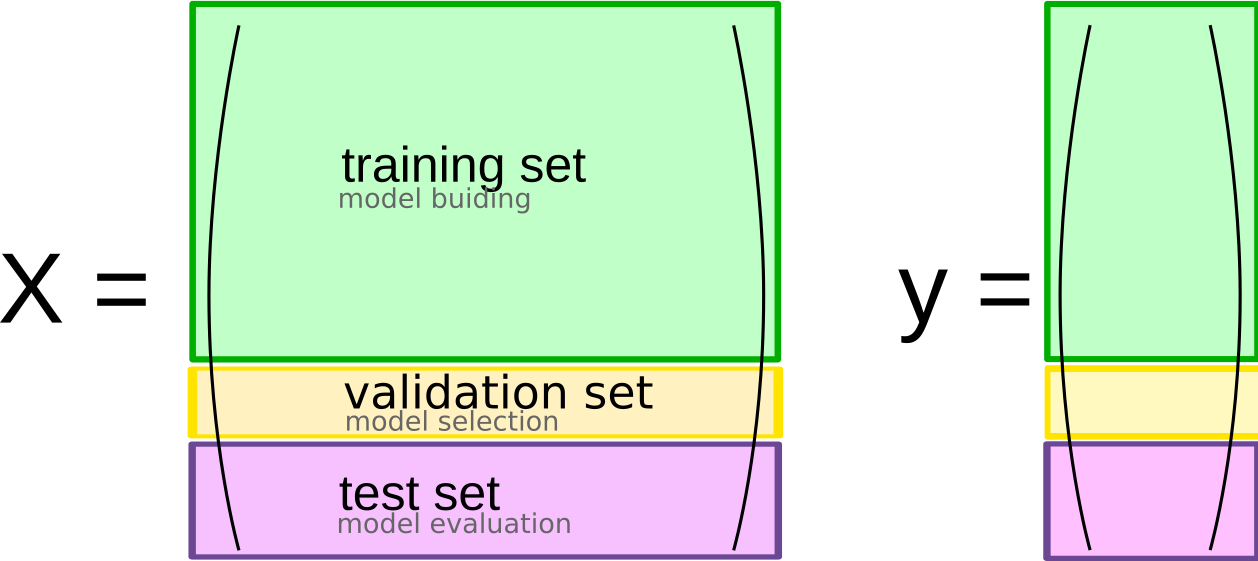

Train-validation-test split

Idea

- Training → Validation (hyperparams tuning) → Test (final eval)

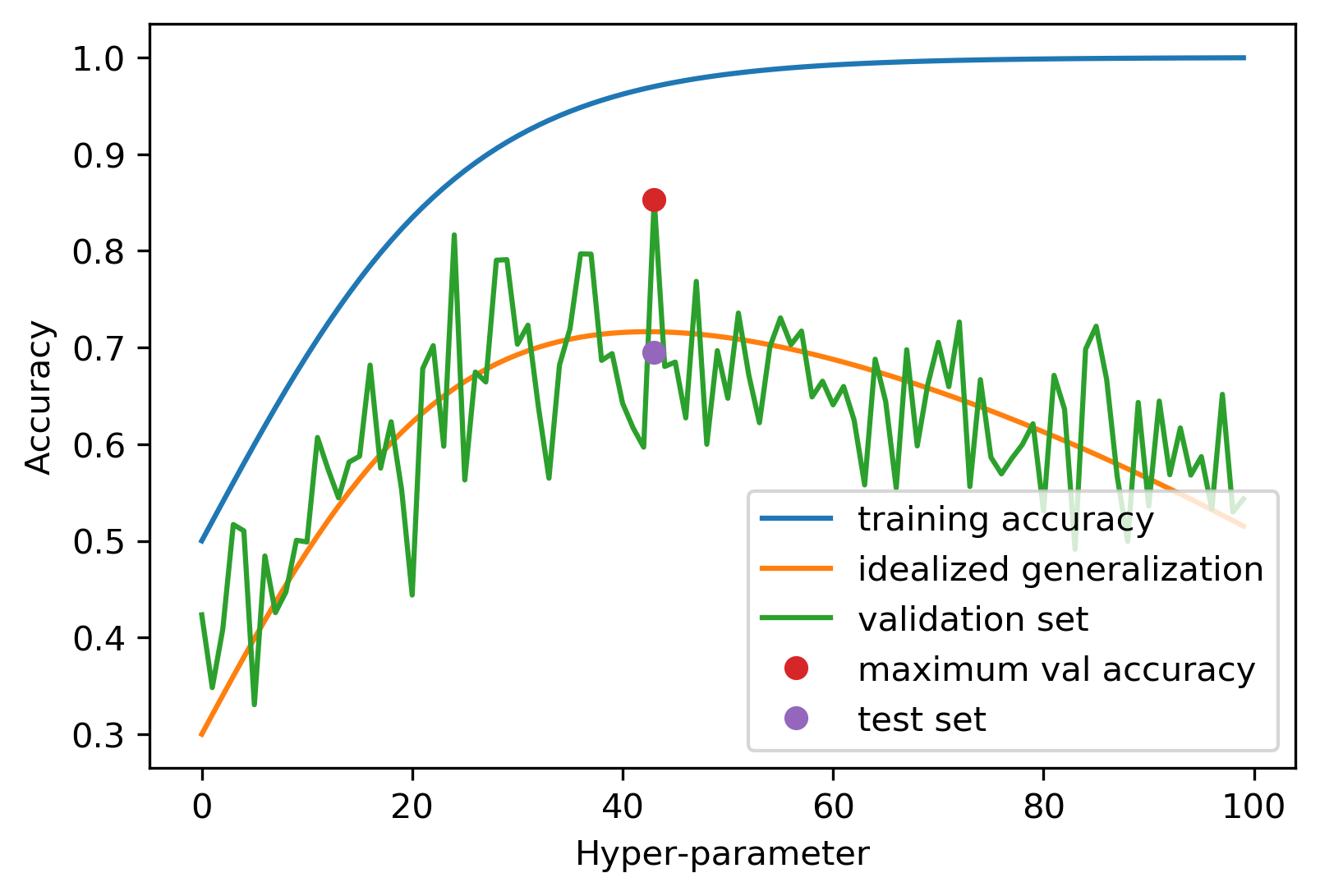

Why use a threefold split?

- It separates hyperparameter tuning (using validation set) and final evaluation (using test set)

- Ensures test set is only used for final evaluation

- Interesting reading: Preventing "Overfitting" of Cross-Validation Data - Ng 1997

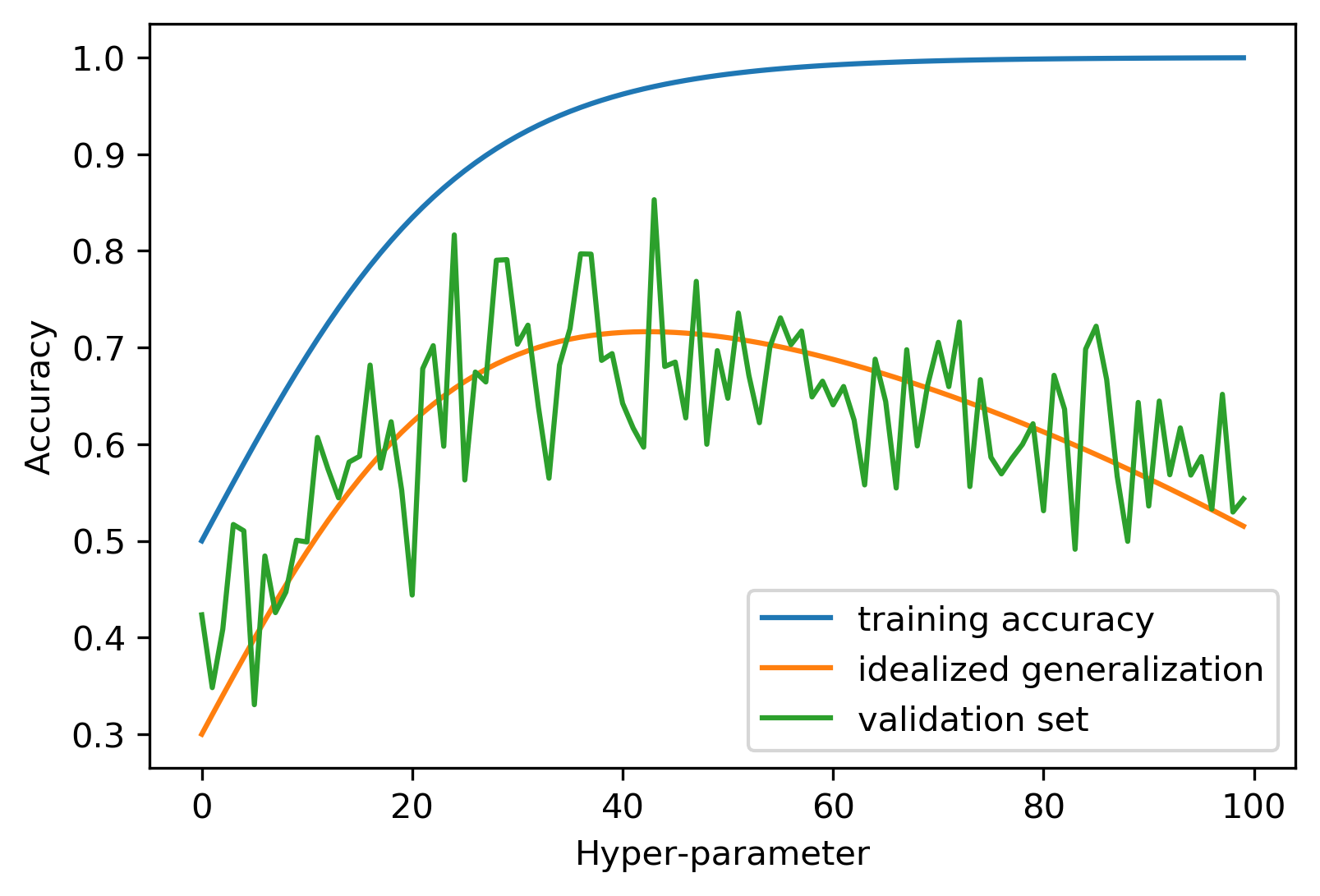

Overfitting the Validation Set

Threefold Split (Code)

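    import numpy as np
    from sklearn.model_selection import train_test_split
    from sklearn.neighbors import KNeighborsClassifier

    # X, y: feature matrix and labels, assumed to be loaded earlier.
    # Hold out the test set first, then split the rest into train and validation.
    X_trainval, X_test, y_trainval, y_test = train_test_split(X, y, random_state=0)
    X_train, X_val, y_train, y_val = train_test_split(X_trainval, y_trainval, random_state=0)

    # Score each candidate k on the validation set.
    val_scores = []
    neighbors = np.arange(1, 15, 2)
    for i in neighbors:
        knn = KNeighborsClassifier(n_neighbors=i)
        knn.fit(X_train, y_train)
        val_scores.append(knn.score(X_val, y_val))

    print(f"best validation score: {np.max(val_scores):.3}\n")
    best_n_neighbors = neighbors[np.argmax(val_scores)]
    print(f"best n_neighbors: {best_n_neighbors}\n")

    # Refit on train + validation with the chosen k, then evaluate once on the test set.
    knn = KNeighborsClassifier(n_neighbors=best_n_neighbors)
    knn.fit(X_trainval, y_trainval)
    print(f"test-set score: {knn.score(X_test, y_test):.3f}")

- best validation score: 0.991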

- best n_neighbors: 11

- test-set score: 0.951

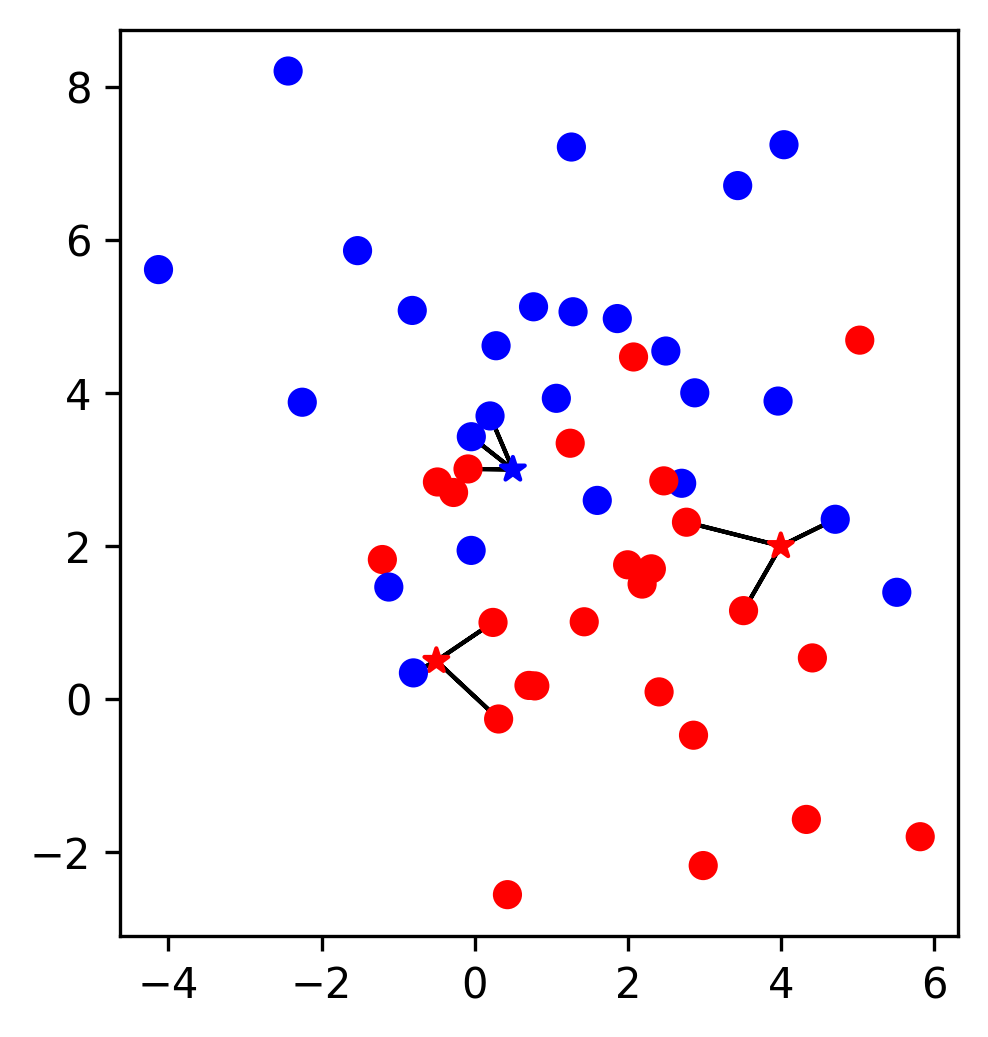

New problem with threefold split

- Fixed train-val-test split: results depend on split

- High variance in best k and test score, not robust

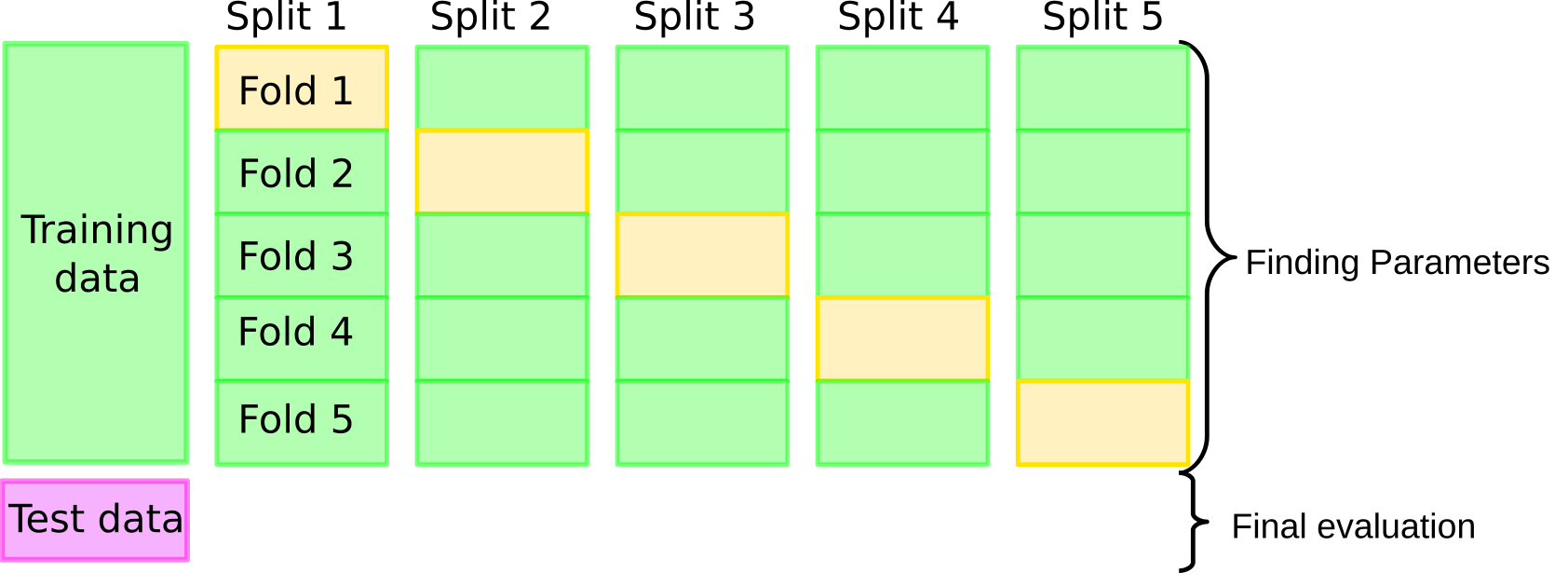

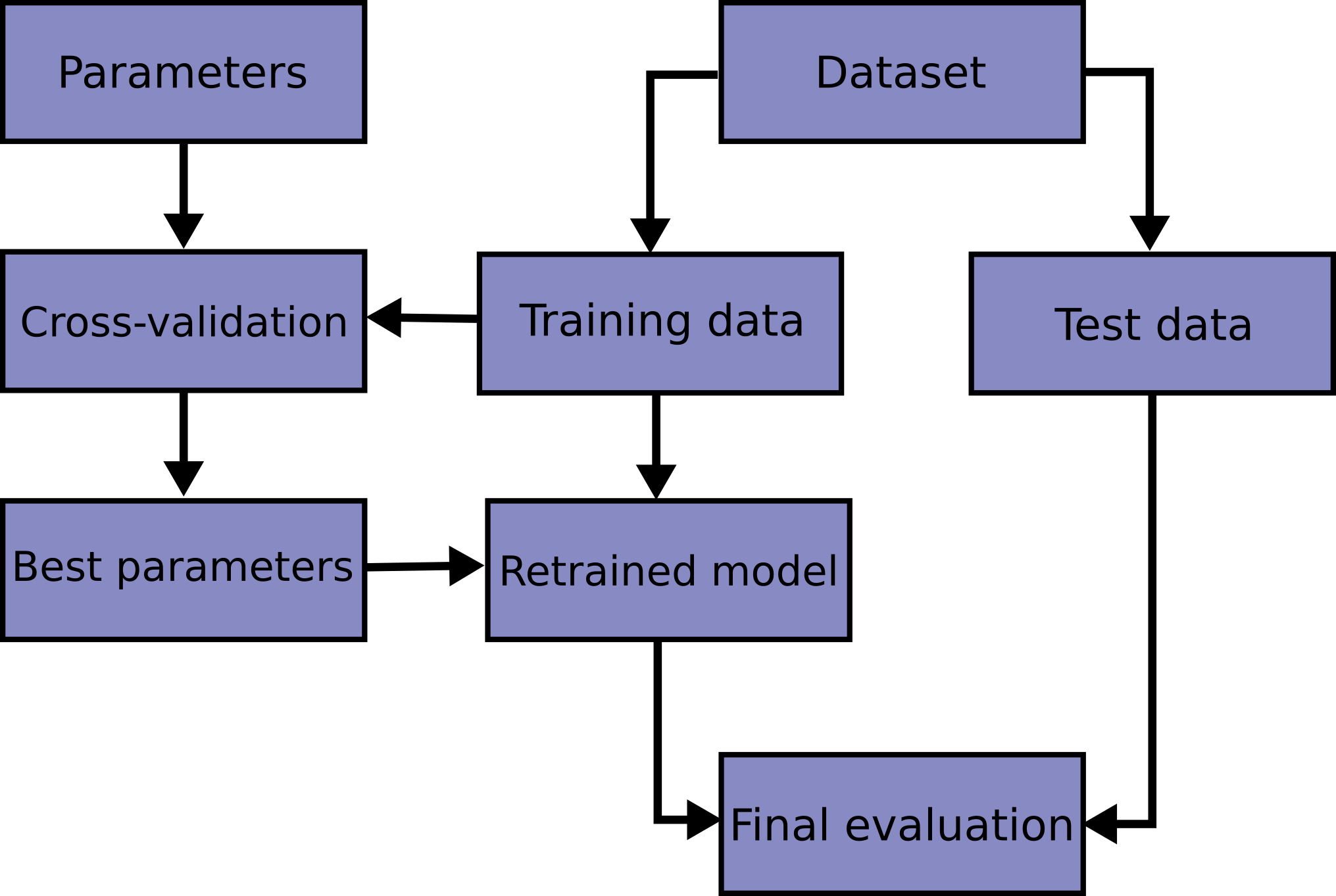
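
The dependence on the split can be checked by repeating the threefold procedure with different random seeds. This is a sketch on a stand-in dataset (an assumption), so the values will not match any table in the slides.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

X, y = load_breast_cancer(return_X_y=True)  # stand-in dataset (assumption)
neighbors = np.arange(1, 15, 2)

rows = []
for seed in range(6):
    # Same threefold procedure each time; only the random split changes.
    X_trainval, X_test, y_trainval, y_test = train_test_split(X, y, random_state=seed)
    X_train, X_val, y_train, y_val = train_test_split(
        X_trainval, y_trainval, random_state=seed
    )
    val_scores = [
        KNeighborsClassifier(n_neighbors=k).fit(X_train, y_train).score(X_val, y_val)
        for k in neighbors
    ]
    best_k = int(neighbors[np.argmax(val_scores)])
    final = KNeighborsClassifier(n_neighbors=best_k).fit(X_trainval, y_trainval)
    rows.append((seed, max(val_scores), best_k, final.score(X_test, y_test)))

print("seed  best_val  best_k  test")
for seed, best_val, best_k, test_score in rows:
    print(f"{seed:4d}  {best_val:8.3f}  {best_k:6d}  {test_score:.3f}")
```

If the chosen k and the test score change noticeably from seed to seed, a single fixed split is not a reliable basis for model selection.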

Cross-Validation + Test Set

Idea

Grid-Search with CV (Code)

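    import numpy as np
    from sklearn.model_selection import cross_val_score, train_test_split
    from sklearn.neighbors import KNeighborsClassifier

    # X, y and the candidate grid `neighbors` are assumed to be defined
    # as in the threefold split code.
    X_train, X_test, y_train, y_test = train_test_split(X, y)

    # Mean 10-fold CV accuracy on the training set for each candidate k.
    cross_val_scores = []
    for i in neighbors:
        knn = KNeighborsClassifier(n_neighbors=i)
        scores = cross_val_score(knn, X_train, y_train, cv=10)
        cross_val_scores.append(np.mean(scores))

    # Refit on the full training set with the best k; use the test set only once.
    best_n = neighbors[np.argmax(cross_val_scores)]
    knn = KNeighborsClassifier(n_neighbors=best_n)
    knn.fit(X_train, y_train)
    print(f"test-set score: {knn.score(X_test, y_test):.3f}")

test-set score: 0.615

Grid-Search Workflow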

GridSearchCV (Code)

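    import numpy as np
    from sklearn.model_selection import GridSearchCV, train_test_split
    from sklearn.neighbors import KNeighborsClassifier

    # Stratify the split so both parts keep the class proportions of y.
    X_train, X_test, y_train, y_test = train_test_split(X, y, stratify=y)

    # Search odd k from 1 to 29 with 10-fold CV on the training set.
    param_grid = {'n_neighbors': np.arange(1, 30, 2)}
    grid = GridSearchCV(KNeighborsClassifier(), param_grid=param_grid, cv=10,
                        return_train_score=True)
    grid.fit(X_train, y_train)

    print("best parameters:", grid.best_params_)
    # grid.score uses the best estimator, refit on all of X_train.
    print(f"test-set score: {grid.score(X_test, y_test):.3f}")

best parameters: {'n_neighbors': np.int64(17)}

test-set score: 0.615

GridSearchCV Results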

n_neighbors Search Results
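
A sketch of how the per-k results can be read from a fitted `GridSearchCV` via its `cv_results_` dictionary. The dataset and `random_state=0` are assumptions made for illustration.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.neighbors import KNeighborsClassifier

X, y = load_breast_cancer(return_X_y=True)  # stand-in dataset (assumption)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, stratify=y, random_state=0
)

param_grid = {"n_neighbors": np.arange(1, 30, 2)}
grid = GridSearchCV(KNeighborsClassifier(), param_grid=param_grid, cv=10,
                    return_train_score=True)
grid.fit(X_train, y_train)

results = grid.cv_results_
# "mean_test_score" is the mean score on the held-out CV folds,
# not the score on X_test.
for k, train_mean, val_mean, val_std in zip(
    results["param_n_neighbors"],
    results["mean_train_score"],
    results["mean_test_score"],
    results["std_test_score"],
):
    print(f"k={int(k):2d}  train={train_mean:.3f}  cv={val_mean:.3f} (+/- {val_std:.3f})")
```

The standard deviation across folds gives a rough sense of whether differences between neighbouring values of k are meaningful.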

How many folds in CV?

- Recommended: 5-fold (default in sklearn) or 10-fold CV

- #folds represents a trade-off between computing time and performance estimate quality

- More folds → more training data per fold → better generalisation performance estimate, but longer computing time

- Computing time is key for large datasets and complex models.

- Advice: first train the model once without CV and record the computing time T, then estimate the CV time as approximately T × #folds.
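
The timing advice above can be sketched as follows. The dataset is a stand-in (an assumption), and the estimate is only a rough guide, since each CV fit uses slightly less data than a full fit.

```python
import time

from sklearn.datasets import load_breast_cancer
from sklearn.neighbors import KNeighborsClassifier

X, y = load_breast_cancer(return_X_y=True)  # stand-in dataset (assumption)

# Time one fit plus one scoring pass without CV.
start = time.perf_counter()
knn = KNeighborsClassifier(n_neighbors=5).fit(X, y)
knn.score(X, y)
T = time.perf_counter() - start

n_folds = 10
estimated_cv_time = T * n_folds
print(f"single run: {T:.3f} s, estimated {n_folds}-fold CV: {estimated_cv_time:.3f} s")
```

For a grid search, multiply again by the number of hyperparameter candidates.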

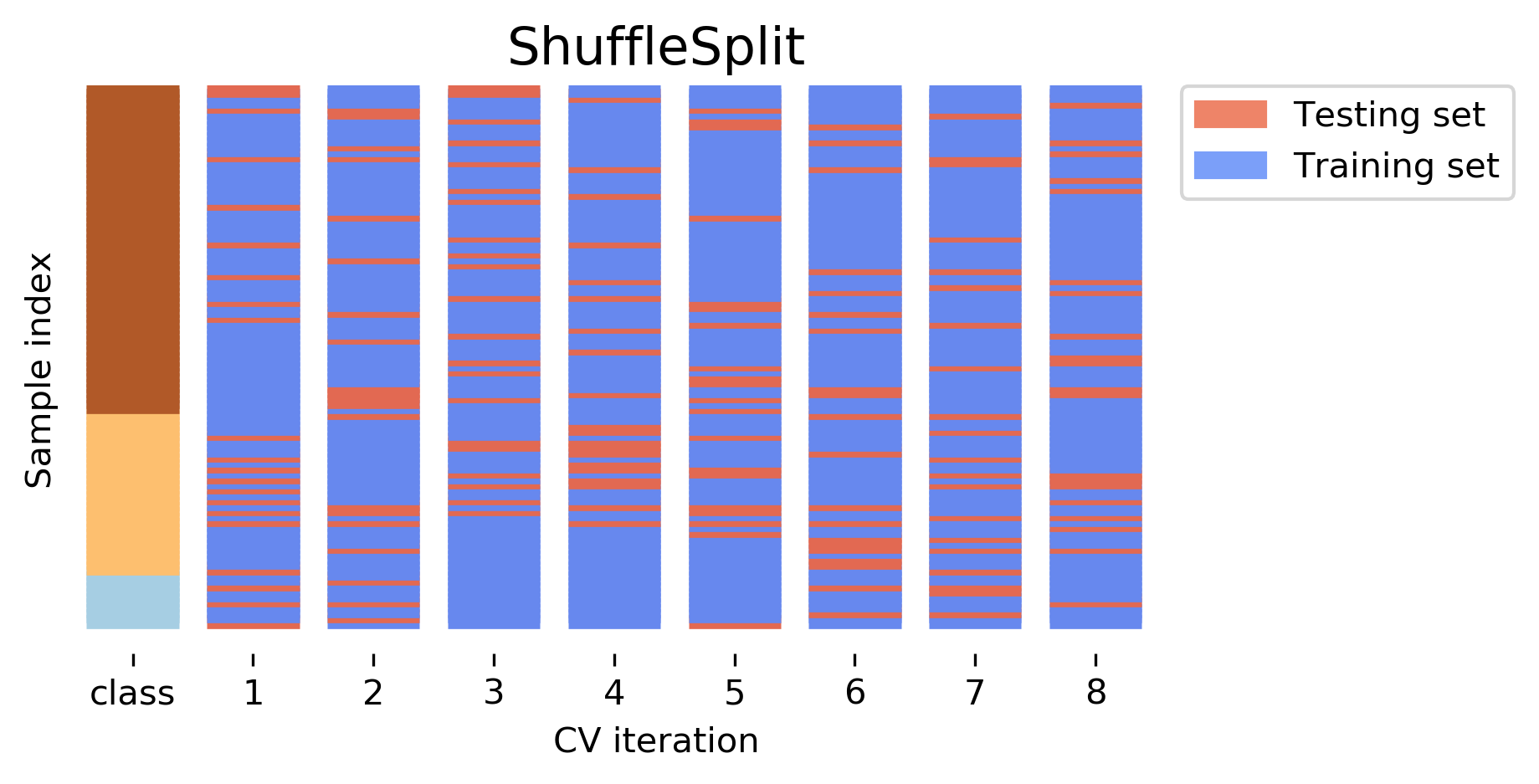

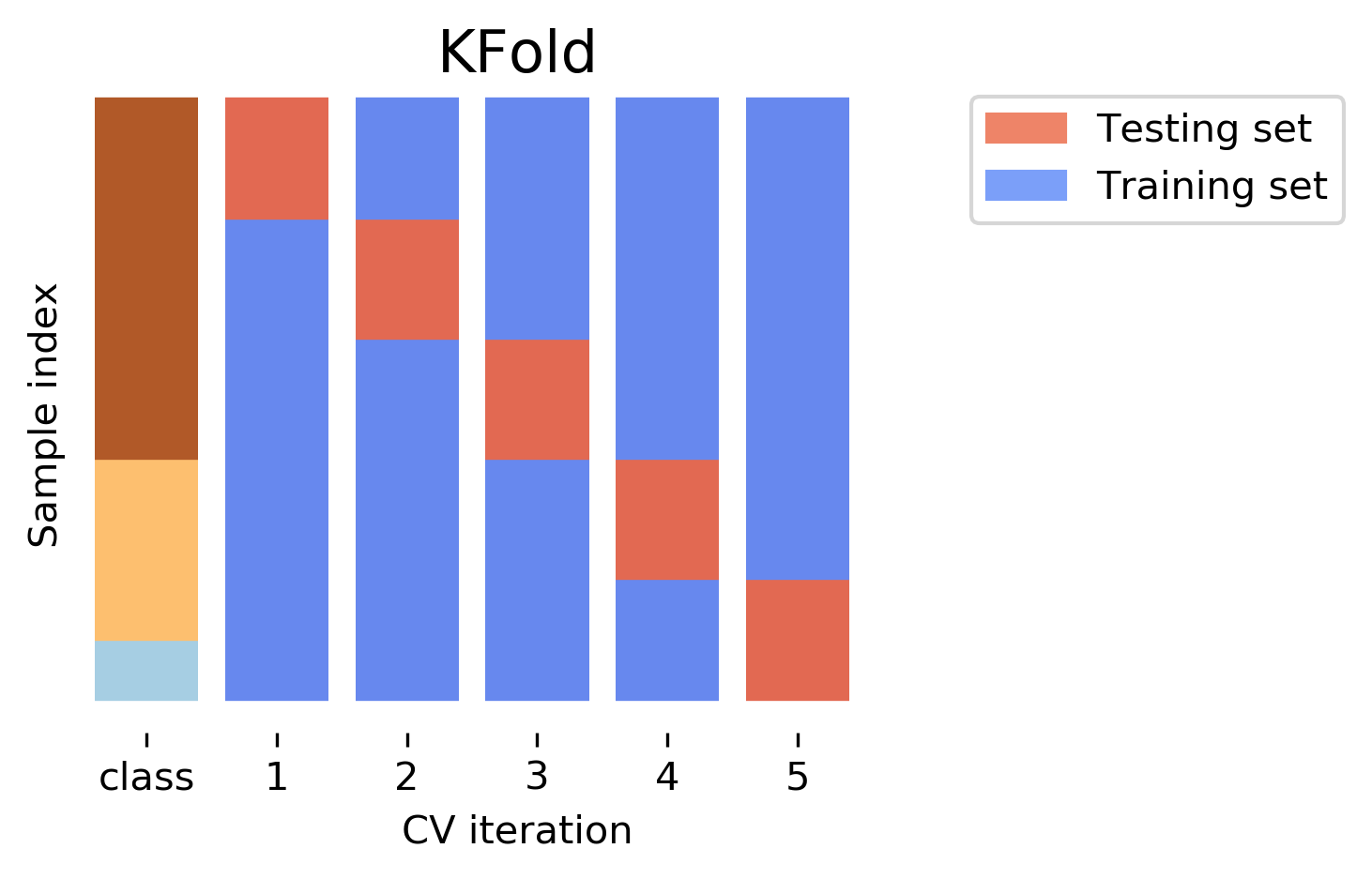

Should data be shuffled in CV?

- In sklearn, KFold does not shuffle data by default and is subject to data ordering

- Recommend setting shuffle=True in KFold, or using ShuffleSplit
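
A small sketch of why ordering matters: when the labels are sorted by class, unshuffled folds can contain a single class. The toy labels are an assumption made for illustration.

```python
import numpy as np
from sklearn.model_selection import KFold

# Labels sorted by class, as often happens when data are stored by category.
y = np.array([0] * 6 + [1] * 6)
X = np.arange(len(y)).reshape(-1, 1)

unshuffled = [y[test_idx] for _, test_idx in KFold(n_splits=3).split(X)]
shuffled = [
    y[test_idx]
    for _, test_idx in KFold(n_splits=3, shuffle=True, random_state=0).split(X)
]

print("no shuffle:", [fold.tolist() for fold in unshuffled])
print("shuffle:   ", [fold.tolist() for fold in shuffled])
```

Without shuffling, the first test fold holds only class 0 and the last only class 1, so the scores from those folds say little about general performance.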

CV extensions

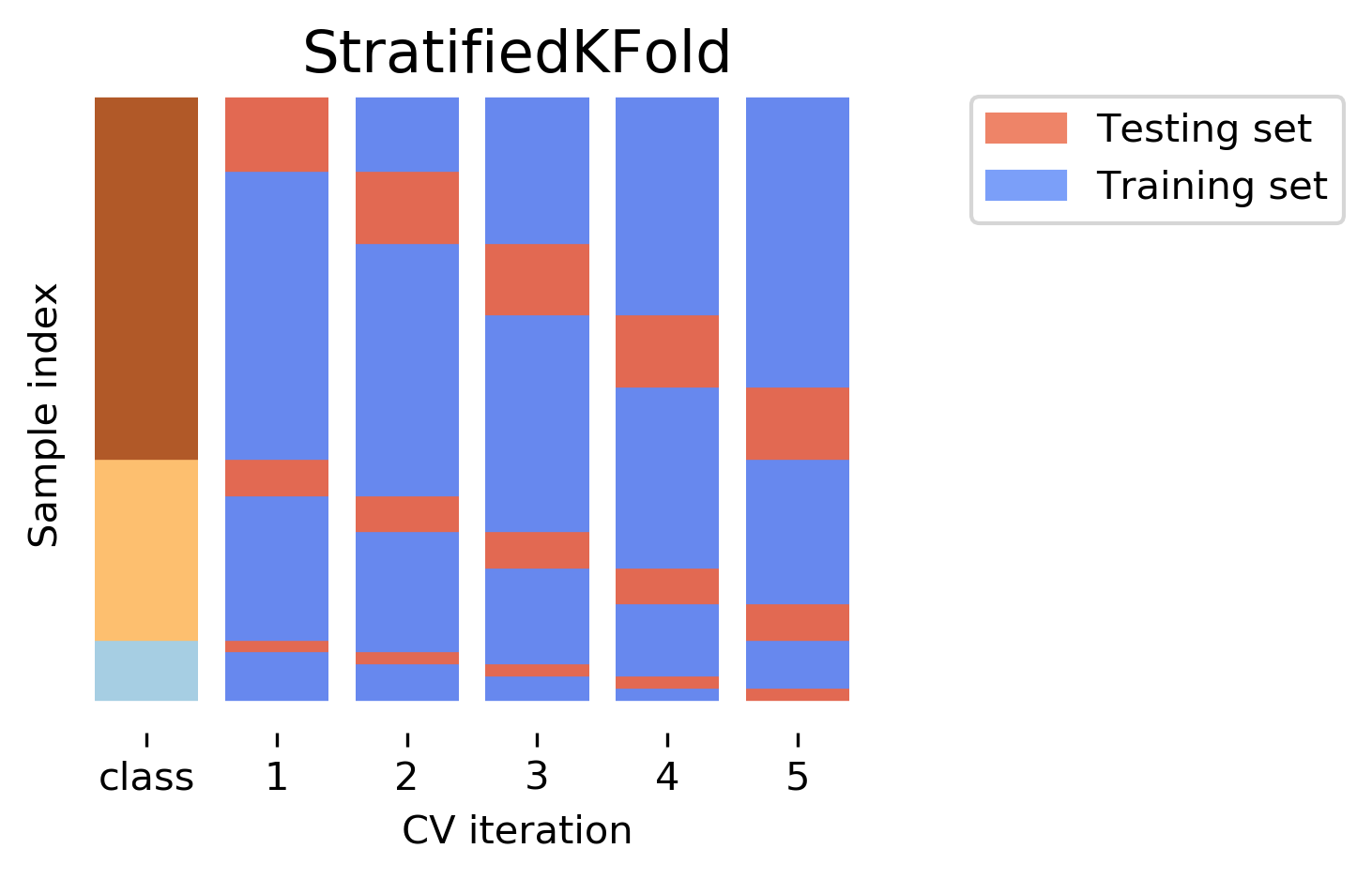

- StratifiedKFold: for multiclass classification or imbalanced data

- GroupKFold: for grouped data

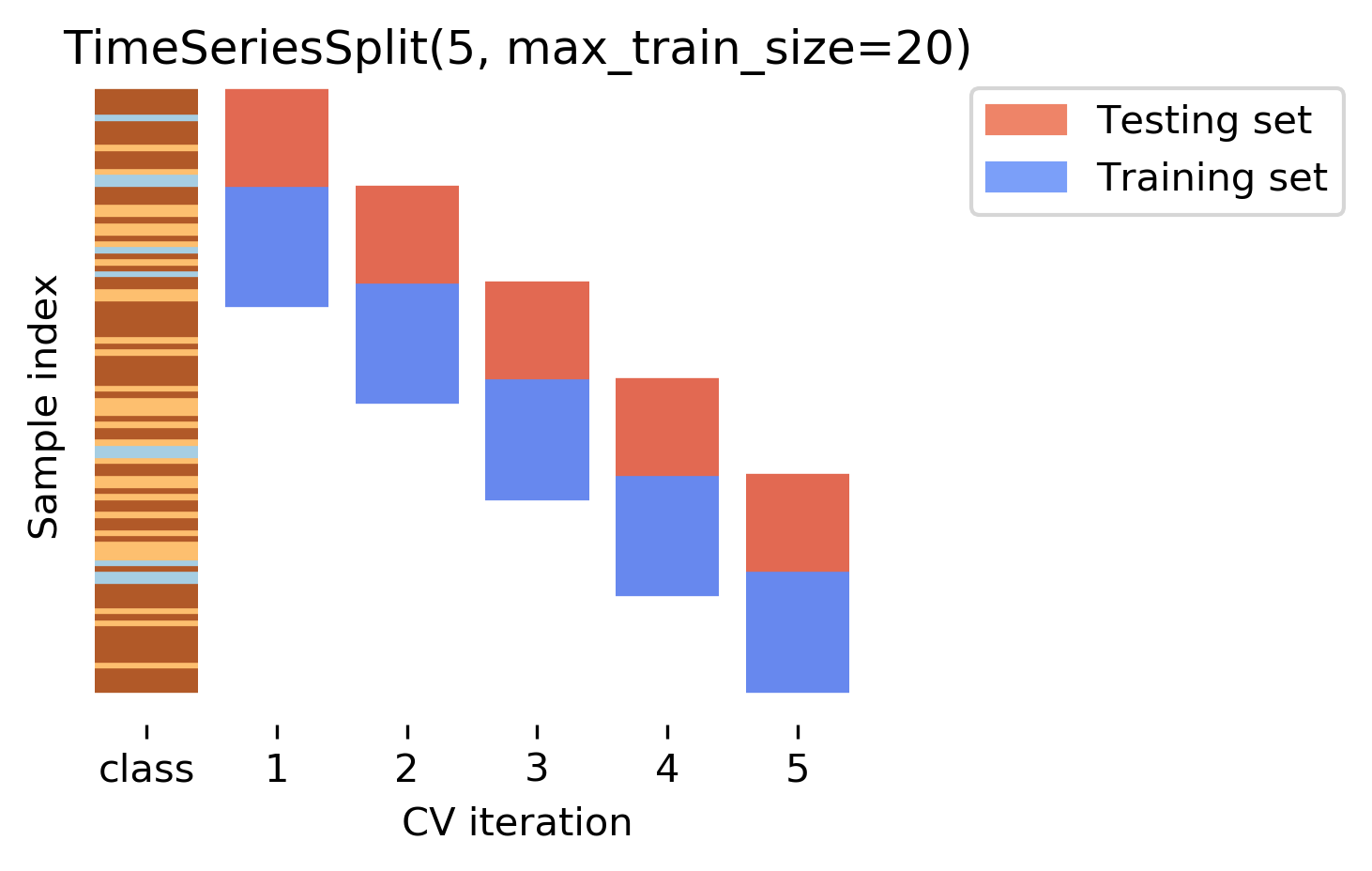

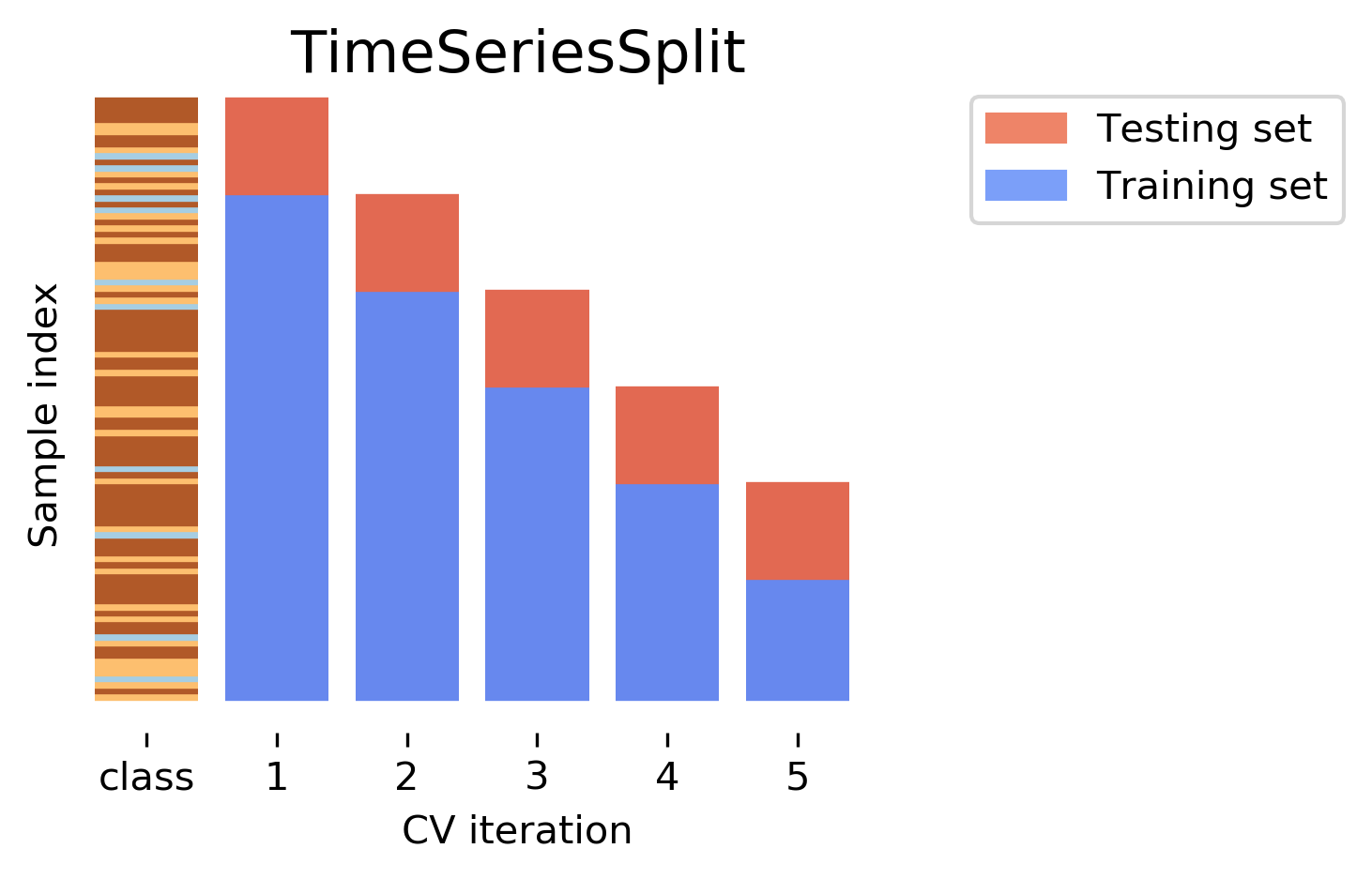

- TimeSeriesSplit: for time series data

Stratified CV

- Standard CV can lead to folds with different class distributions

Stratified CV: for multiclass classification or imbalanced data

- Preserve class distribution in each fold

Stratified CV (Code)

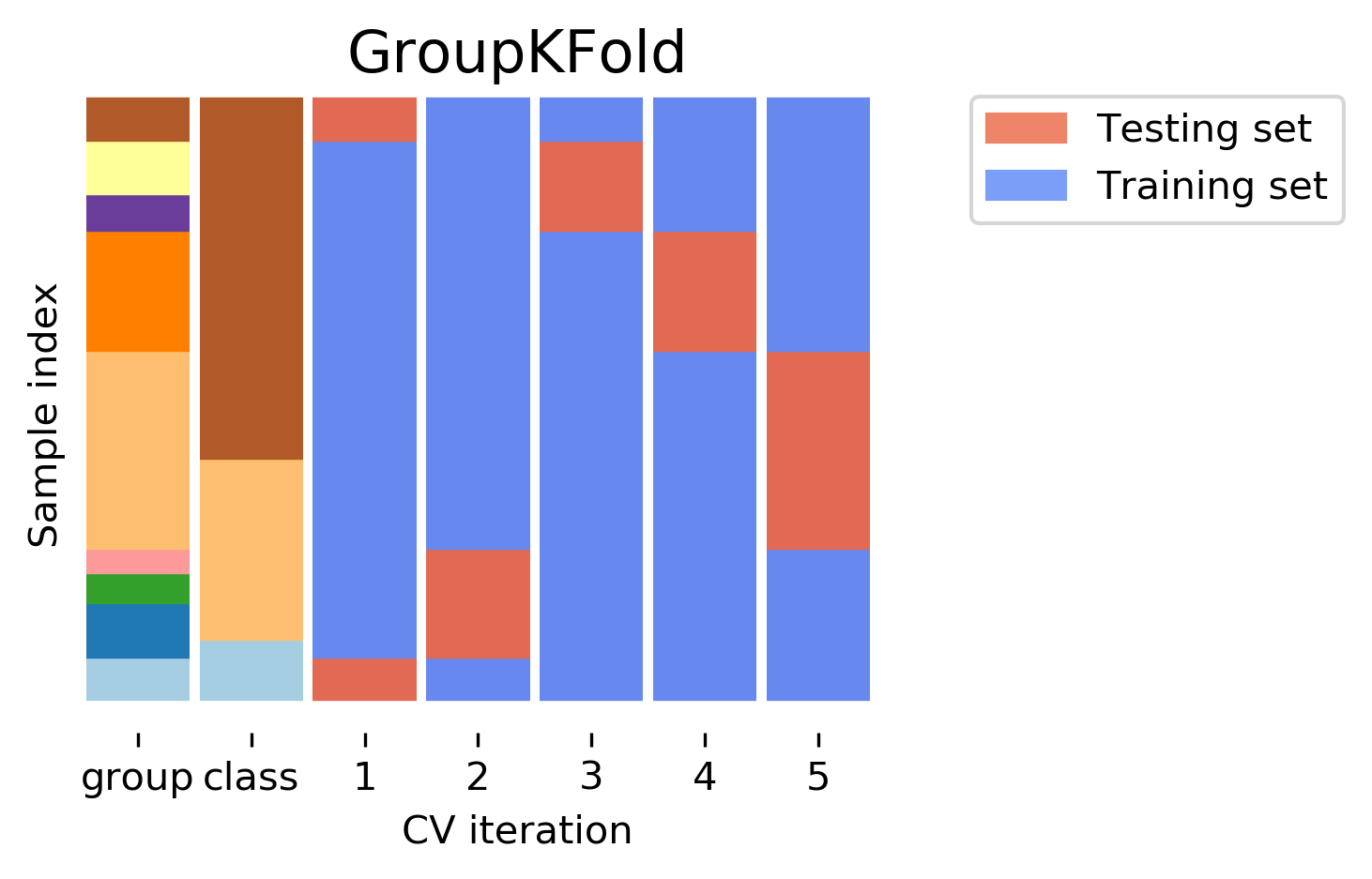
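
A sketch comparing the class balance per fold under KFold and StratifiedKFold. The imbalanced toy labels (80% class 0, 20% class 1) and the helper `positive_rate_per_fold` are assumptions made for illustration.

```python
import numpy as np
from sklearn.model_selection import KFold, StratifiedKFold

# Imbalanced toy labels, sorted by class: 40 of class 0, 10 of class 1.
y = np.array([0] * 40 + [1] * 10)
X = np.zeros((len(y), 1))  # features play no role in how folds are built

def positive_rate_per_fold(cv):
    """Share of class 1 in the test part of each fold."""
    return [float(y[test_idx].mean()) for _, test_idx in cv.split(X, y)]

plain = positive_rate_per_fold(KFold(n_splits=5))
stratified = positive_rate_per_fold(StratifiedKFold(n_splits=5))

print("KFold:          ", plain)
print("StratifiedKFold:", stratified)
```

StratifiedKFold keeps the 20% share of class 1 in every fold, whereas unshuffled KFold puts all of class 1 into the last fold.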

CV for grouped data?

- CV is more complicated when data are grouped

- Data points within a group are correlated (e.g., city, patient, user)

- e.g. The task is to predict whether a patient has a disease based on medical records from 9 cities

- How CV should be done depends on the application scenario

Scenario 1: To predict new data from any existing cities (same distribution as training data)

- Standard CV (e.g. KFold, RepeatedKFold) can be used

- Group information can be ignored

Scenario 2: To predict new data from unknown cities (not i.i.d.)

- GroupKFold should be used; it keeps each group entirely on one side of every split (training or test, never both)

- Data from 9 cities; 5-fold CV; GroupKFold as below.

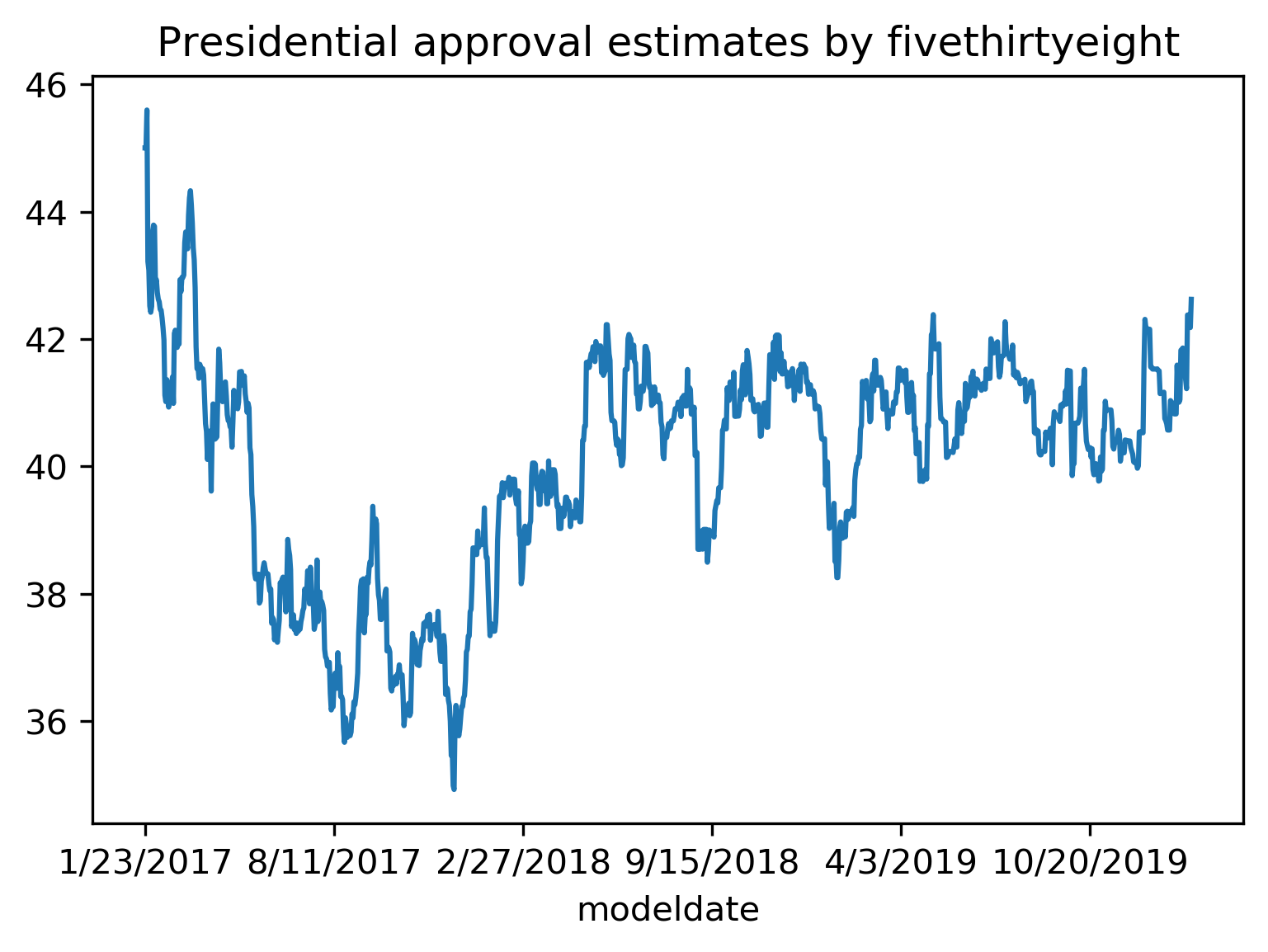

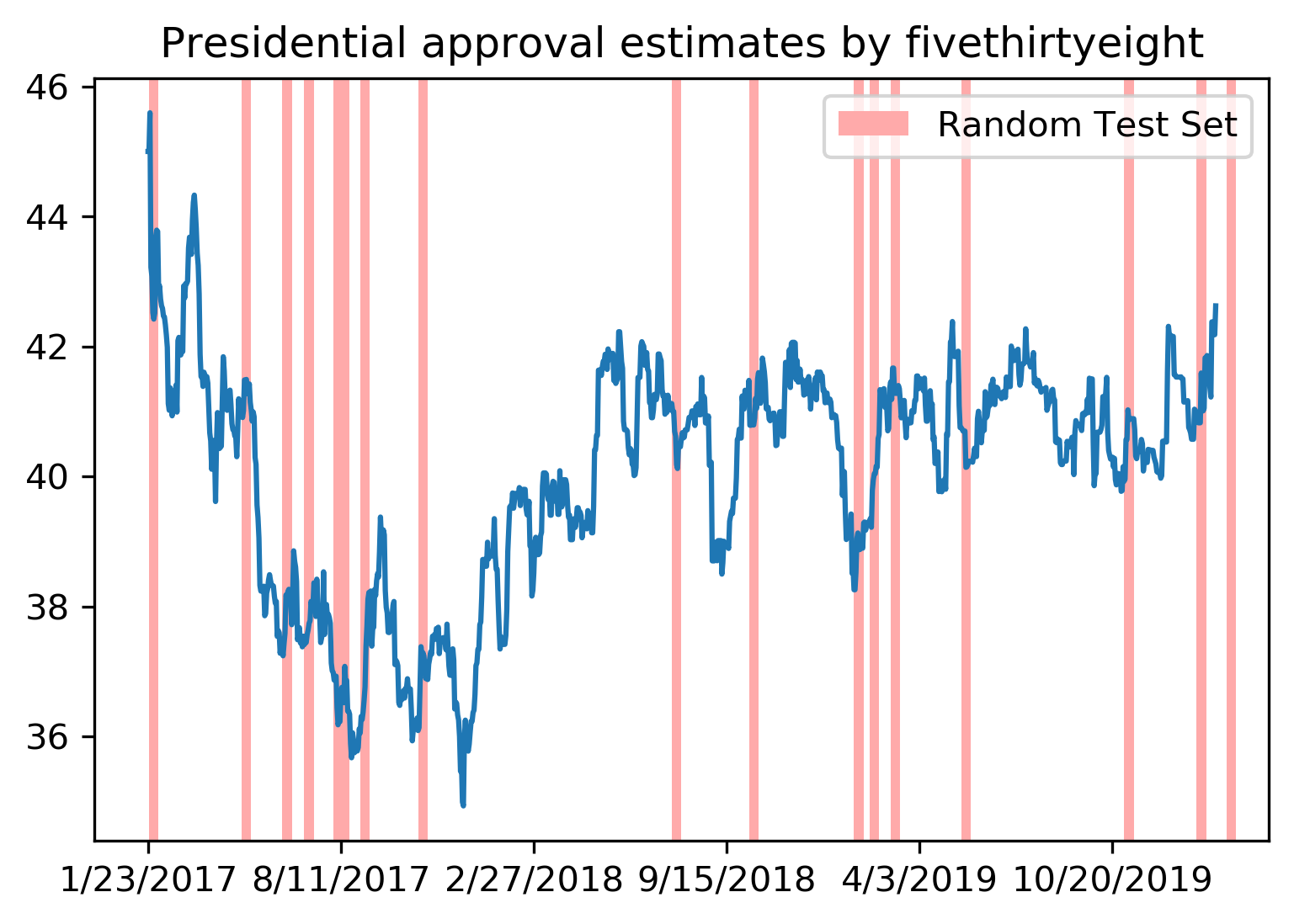

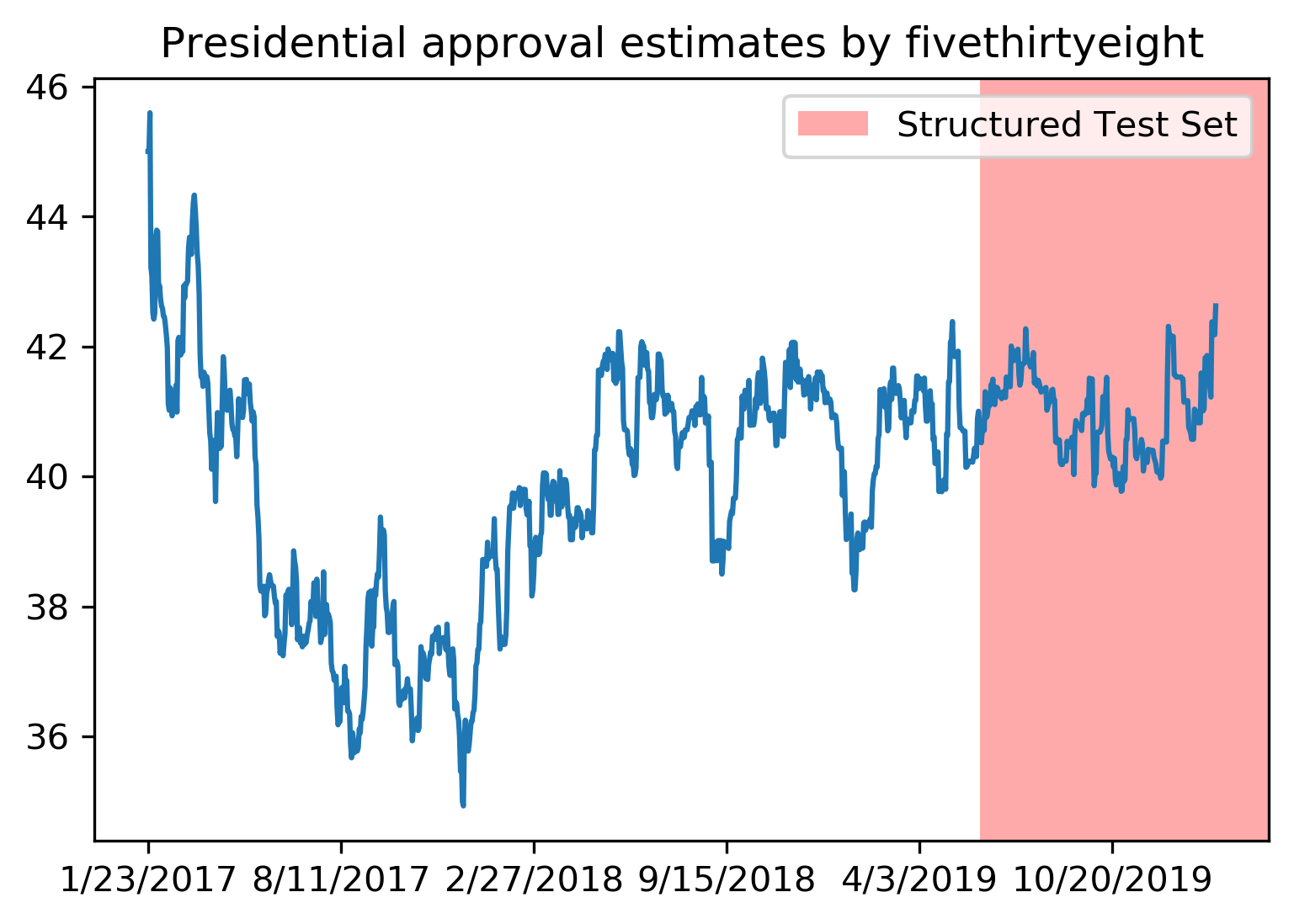
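
A sketch of GroupKFold with 9 cities and 5 folds. The city IDs and the number of records per city are hypothetical; only the grouping matters here.

```python
import numpy as np
from sklearn.model_selection import GroupKFold

# 9 hypothetical cities with 4 records each.
groups = np.repeat(np.arange(9), 4)
X = np.zeros((len(groups), 1))
y = np.zeros(len(groups))

splits = list(GroupKFold(n_splits=5).split(X, y, groups=groups))
for fold, (train_idx, test_idx) in enumerate(splits):
    test_cities = sorted(set(groups[test_idx].tolist()))
    print(f"fold {fold}: test cities {test_cities}")
```

Every city appears in the test part of exactly one fold, and never in the training part of that same fold, which mimics predicting for cities the model has not seen.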

CV for time series?

- Data is collected over time and is not i.i.d.; future data depends on past data

- Standard (shuffled) train-test split or CV is not suitable for time series, because the model would be trained on data from the future of its test points

Train-Test Split for Time Series

- Using past data to train, future data to test

TimeSeriesSplit

Time Series CV
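
A sketch of scikit-learn's TimeSeriesSplit on 12 time-ordered observations. The series length and `n_splits=3` are arbitrary choices for illustration.

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# 12 observations, assumed to be sorted from oldest to newest.
X = np.arange(12).reshape(-1, 1)

splits = list(TimeSeriesSplit(n_splits=3).split(X))
for train_idx, test_idx in splits:
    print("train:", train_idx.tolist(), "test:", test_idx.tolist())
```

In each split the training window grows and the test block always lies after it, so the model is never evaluated on data older than what it was trained on.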

Overview

We’ve covered:

- Train-test split and threefold split (not recommended for model selection)

- Cross-validation plus a test set is recommended

- In cross-validation, recommended to use 5-fold or 10-fold CV with shuffling

- Stratified CV (StratifiedKFold) for multiple classes and imbalanced data

- CV for grouped data and time series data

Questions?

© CASA | ucl.ac.uk/bartlett/casa